How to Integrate AI Tools Like ChatGPT into Classroom Learning

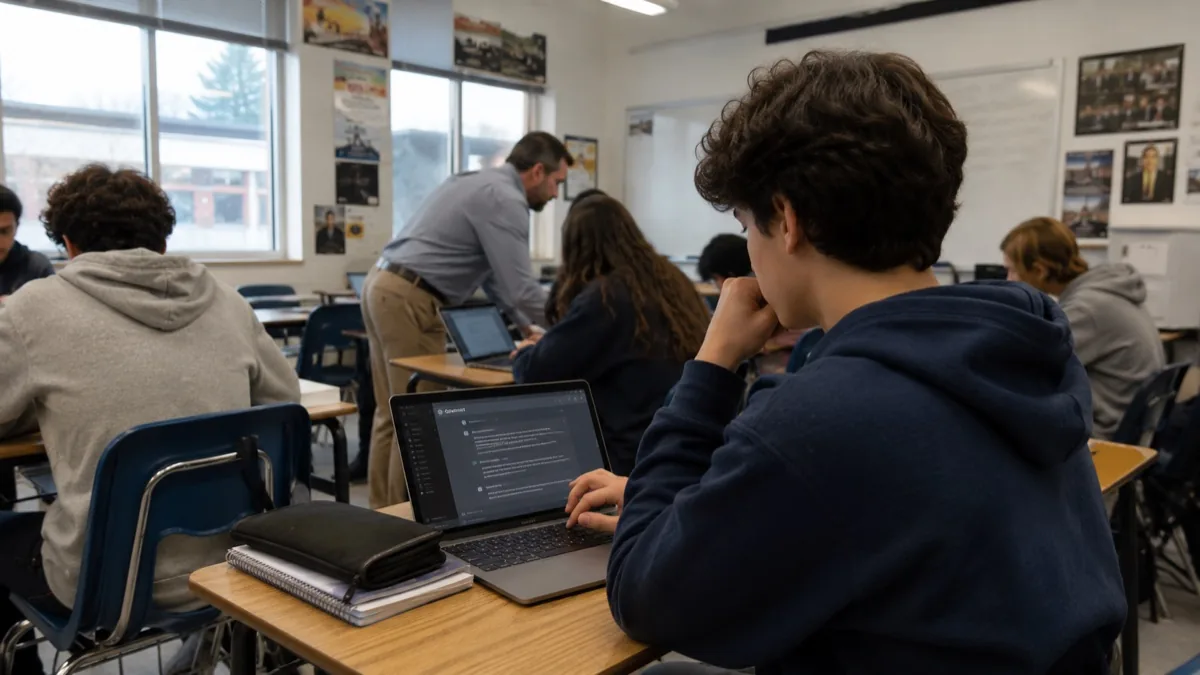

The first wave of school responses to ChatGPT, outright bans, has clearly failed. The second wave, pretending it doesn't exist and adjusting nothing, has also failed. The third wave, now taking hold in 2026, is the only one that's working: deliberate integration with explicit guardrails, redesigned assessments, and honest conversations with students about which uses make them smarter and which make them dumber. This is the practical guide for teachers in that third wave, written from observations across U.S. K-12 and college classrooms over the 2024-2026 period.

The audience here is teachers who want to integrate AI tools into their teaching without becoming AI evangelists or AI panickers. The recommendations are calibrated — not maximalist, not minimalist, just what's actually working in classrooms with mixed-ability students and the usual range of academic-integrity pressures.

Why the bans failed (and what to learn from it)

New York City Public Schools' 2023 ChatGPT ban is the most-studied example of why bans don't work in this specific case. Three things happened during that ban that any teacher should know.

First, students used ChatGPT anyway, but only those with home internet and personal devices, which in practice meant the more privileged students. Second, teachers couldn't tell who had used it, because AI-detection tools have always been less reliable than their marketing claims. Third, the academic-integrity dynamic got worse, not better, because students who were using ChatGPT to "cheat" got no feedback on whether their use was helping or hurting their learning. NYC quietly reversed the ban in May 2023, and most peer districts that had followed its lead have since moved to integration policies.

The lesson for individual teachers is the same as the lesson for districts. You can't put the technology back in the box. The choice is between students using it without your guidance, or students using it with your guidance. The latter produces better outcomes.

The four classroom uses that consistently work

Across the schools that have been integrating ChatGPT for two-plus semesters, four use patterns have emerged as durably useful.

Socratic tutoring on demand. ChatGPT (or Claude, or Gemini) used as a study partner that asks the student questions, walks them through problems, and provides instant feedback on their reasoning. This works particularly well for students who don't have access to private tutoring outside school hours and for subjects where additional practice with feedback is the bottleneck (math, foreign language, programming). The pattern that works: the student does the work, then prompts the AI to critique or extend it.

Differentiated reading materials. Teachers using ChatGPT to convert one source text into versions at different reading levels, with vocabulary lists and comprehension questions calibrated for each. This used to be hours of editorial work; AI collapses it to a five-minute prompt session. Tools like Diffit have built dedicated UIs for this, but plain ChatGPT works fine for teachers who just want it for occasional use.
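For teachers comfortable with a little scripting, the leveling workflow above can be made repeatable by generating one prompt per reading level and pasting each into the chat tool of choice. The Python sketch below is only an illustration: the grade levels, the prompt wording, and the function name `build_leveling_prompt` are assumptions, not a tested recipe, and the script does not call any AI service.

```python
# Hypothetical target levels; adjust to the range in your own classroom.
READING_LEVELS = ["grade 4", "grade 7", "grade 10"]


def build_leveling_prompt(source_text, level, n_vocab=8, n_questions=5):
    """Return one prompt asking for a leveled rewrite plus support materials."""
    return (
        f"Rewrite the passage below for a {level} reading level. "
        "Keep every key fact and do not add new information.\n"
        f"Then list {n_vocab} vocabulary words from your rewrite with short definitions, "
        f"and write {n_questions} comprehension questions suited to that level.\n\n"
        f"PASSAGE:\n{source_text}"
    )


# Placeholder passage; replace with the actual source text.
passage = "Photosynthesis is the process by which plants convert light into chemical energy."

# One prompt per level, ready to paste into a chat window.
prompts = {level: build_leveling_prompt(passage, level) for level in READING_LEVELS}

for level, prompt in prompts.items():
    print(f"--- {level} ---")
    print(prompt)
    print()
```

Keeping the prompt in one place means every level gets the same instructions, which makes the resulting versions easier to compare before handing them to students.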

Drafting assistance for student work. The most controversial pattern, but increasingly the most defensible. Teachers explicitly assigning ChatGPT-assisted drafting and asking students to disclose what was AI-generated, edit it substantially, and reflect on what they kept and changed. The reflection is the actual learning objective; the AI is the scaffold. Students learn editing and judgment skills that are more relevant to 2026's work environment than from-scratch composition skills are.

Feedback and rubric application. Teachers using ChatGPT to generate first-pass formative feedback on student drafts (with the teacher reviewing and editing before sending). The time savings here are substantial for teachers with 130+ students; the quality of feedback per student goes up because the teacher has time to add personalized commentary on top of AI-drafted observations.

The four patterns to avoid

Equally important: the four patterns where ChatGPT classroom use has consistently produced bad outcomes.

Replacing direct instruction with AI tutors. Schools that piloted "AI as primary instructor" for select courses saw dramatic drops in engagement and completion. AI is supplementary; it's not yet a substitute for teacher-led instruction.

AI plagiarism detectors as primary evidence in academic-integrity cases. The detectors have documented false-positive rates of 15-30 percent for non-native English speakers and substantial false-negative rates against modern AI use. Districts and universities that have used detector scores as primary evidence have lost at appeal repeatedly. Use them, if at all, as one signal among many — and keep human-readable evidence (writing samples, proficiency-level baselines, in-class performance) primary.
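To see why a detector flag is weak evidence on its own, it helps to work through the base-rate arithmetic. The Python sketch below is a minimal illustration: the 15 percent false-positive rate is the low end of the range quoted above, while the class size, the share of AI-written essays, and the detector's catch rate are made-up assumptions, not measured values.

```python
def flag_breakdown(n_essays, share_ai_written, catch_rate, false_positive_rate):
    """Return (true_flags, false_flags, share_of_flags_that_are_wrong)."""
    ai_written = n_essays * share_ai_written
    human_written = n_essays - ai_written
    true_flags = ai_written * catch_rate              # AI-written essays correctly flagged
    false_flags = human_written * false_positive_rate  # honest essays wrongly flagged
    total_flags = true_flags + false_flags
    wrong_share = false_flags / total_flags if total_flags else 0.0
    return true_flags, false_flags, wrong_share


# Hypothetical class: 100 essays, 20% AI-written, detector catches 80% of those
# and wrongly flags 15% of the human-written essays.
true_flags, false_flags, wrong_share = flag_breakdown(100, 0.20, 0.80, 0.15)

print(f"Correct flags: {true_flags:.0f}, false flags: {false_flags:.0f}")
print(f"Share of flags that land on an honest student: {wrong_share:.0%}")
```

With these made-up inputs, 12 of the 28 flagged essays (about 43 percent) were written by the student. The exact figure depends entirely on the assumed rates, but the pattern holds whenever most students are not cheating: even a modest false-positive rate produces a large share of wrongful flags.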

AI-only formative assessment. ChatGPT is good at generating quiz questions but bad at generating consistent quiz questions across a unit. Auto-generated quiz banks tend to drift in difficulty and topic coverage, which makes them poor for tracking student progress over time.

Letting students use ChatGPT for math without showing work. This is the single most common failure mode in middle and high school. ChatGPT will produce the right answer (mostly) and the student will copy it. The skill loss is real and shows up in subsequent units. Math classrooms that integrate AI successfully require step-by-step work product even when the student used ChatGPT — and they evaluate the steps, not just the answer.

Redesigning assessments for the AI era

The single highest-impact change a teacher can make in 2026 is redesigning assessments to assume students have AI access. This isn't about defeating AI cheating; it's about teaching skills that matter in a world where AI is ambient.

Three patterns have emerged across teachers who've done this redesign well:

In-class oral defense of written work. Students complete written work outside class (with or without AI assistance), then defend it in class with follow-up questions that require genuine understanding. The grade weights toward the defense, not the writing. Students who used AI for thoughtless drafting can't defend it; students who used AI to scaffold genuine learning can.
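The weighting idea can be made concrete with a small calculation. The sketch below assumes a hypothetical 60/40 split in favor of the defense and scores on a 0-100 scale; both the split and the function name `assignment_grade` are illustrative choices, not a recommended standard.

```python
def assignment_grade(written_score, defense_score, defense_weight=0.6):
    """Blend two 0-100 scores, weighting the oral defense more heavily."""
    if not 0.0 <= defense_weight <= 1.0:
        raise ValueError("defense_weight must be between 0 and 1")
    return defense_weight * defense_score + (1 - defense_weight) * written_score


# A polished essay the student cannot explain in class.
polished_but_undefended = assignment_grade(written_score=95, defense_score=50)

# A rougher essay the student clearly understands.
rough_but_understood = assignment_grade(written_score=75, defense_score=90)

print(f"Polished but undefended: {polished_but_undefended:.0f}")
print(f"Rough but understood: {rough_but_understood:.0f}")
```

Under this assumed split, the polished-but-undefended essay ends up at 68 and the rough-but-understood one at 84, so the grade rewards understanding over surface polish.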

Process documentation as the deliverable. Instead of "write a 500-word essay on X," the assignment is "document the process you used to research and write about X," including AI prompts, drafts, critiques, and revisions. The process artifact is the deliverable; the final essay is a byproduct. This makes AI use a feature rather than a threat.

Source-restricted essay prompts. Essays that draw on specific in-class discussions, idiosyncratic teacher anecdotes, or recent local events are very difficult to AI-cheat well. Generic prompts that any AI can answer are easy to AI-cheat. Specific prompts shift the cost-benefit toward genuine engagement.

For more on the broader picture of what teachers can do beyond AI integration specifically, our companion guide to practical tips for teachers in the digital age covers the full digital-pedagogy stack. The wider context of how AI is reshaping education sits in our AI in education pillar.

Equity considerations that get overlooked

Three equity issues that thoughtful teachers should plan around:

Access asymmetry at home. Some students have ChatGPT Plus or family-shared Claude Pro accounts; some have only the free tier; some have no home internet. School-provided AI access (via Khanmigo or district-licensed ChatGPT for Edu) levels the field, but only when actually deployed. If your school doesn't have it, lobbying for it is one of the highest-leverage equity moves available right now.

Language barriers and AI use. AI tutoring tools work substantially better in English than in most other languages. ESL students benefit hugely from AI translation and writing support, but suffer disproportionately from AI-detection false positives. Teachers in classrooms with significant ESL populations should be especially skeptical of detector results.

Special education and AI accommodations. Students with reading disabilities, ADHD, and other learning differences often have IEPs that specifically allow technology accommodations. AI tools are increasingly within scope of those accommodations, but some teachers and proctors haven't caught up. Coordinate with the SPED team early.

Practical first-week implementation

For a teacher who's starting from zero and wants to integrate ChatGPT thoughtfully into their classroom, three steps cover the first week and the one after it:

Day one: open conversation. Acknowledge that students are using AI, explicitly. Tell them you're not going to ban it but you are going to set rules. Explain which uses you consider productive and which you consider plagiarism. Ask them which uses they consider productive and which they consider plagiarism. The conversation itself is a teaching moment about academic integrity in 2026.

Days two through five: try it yourself. Use ChatGPT to draft a lesson plan, generate a quiz, level a reading passage, and produce formative feedback on a student paper. Notice what it's good at and where it falls flat. The hands-on familiarity gives you the credibility to set rules students will respect.

Week two: redesign one assessment. Take one upcoming assessment and redesign it on the assumption that students have AI access. Add an in-class defense, or a process documentation requirement, or a source-restriction. See how it goes. Adjust. Don't try to redesign every assessment at once; pick one per month and iterate.

Frequently Asked Questions

What's the right age for students to start using ChatGPT in school?

Most schools that have been thoughtful about this start age-appropriate AI use in middle school (grade 6+) and reserve direct ChatGPT access for high school. OpenAI's terms of service set a minimum age of 13 and require parental consent for users under 18. Younger students benefit more from age-appropriate tools like Khanmigo (which has guardrails for younger users) than from raw ChatGPT.

Should I let students cite ChatGPT in their bibliography?

Yes, with structure. Citing AI as a source teaches students to distinguish AI output from human-authored sources, and to evaluate AI output for accuracy. Most major style guides (APA, MLA, Chicago) now have specific guidance for AI citation.

What if a student uses ChatGPT for a take-home essay and I can't tell?

You probably can't reliably tell from the writing alone. The defensible move is to design assessments where it doesn't matter — combine take-home writing with in-class oral defense, process documentation, or follow-up questions. The students who genuinely engaged can answer; the students who didn't can't.

Is it ethical to use ChatGPT to grade papers?

Generating a first-pass set of comments that a human teacher reviews and edits is fine. Using AI as the sole grader of substantive student work is not. The middle ground — AI-drafted feedback, teacher-finalized — is where time savings come from without abandoning the teacher's pedagogical role.

How do I keep up as the AI tools keep changing?

Pick one or two tools and use them deeply rather than chasing every new release. The marginal value of switching from ChatGPT to Claude (or vice versa) is small; the marginal value of using either deeply enough to know its real strengths is large. Subscribe to one EdTech newsletter for occasional context, but don't try to track everything.

The bottom line

Integrating ChatGPT into the classroom is no longer optional in 2026 — students are using it whether teachers permit it or not. The question is whether teachers integrate it thoughtfully, with guardrails and pedagogical clarity, or refuse to engage and let students figure it out without guidance.

The teachers who've done this well report better student outcomes, less academic-integrity drama, and more time for the work that matters most — relationship-building, motivation, and the kind of judgment-development that AI cannot do for them. Start with one tool, one redesigned assessment, and one honest conversation. Iterate from there.