Google DeepMind Unveils Gemini Robotics-ER 1.6 With Breakthrough Spatial and Physical Reasoning

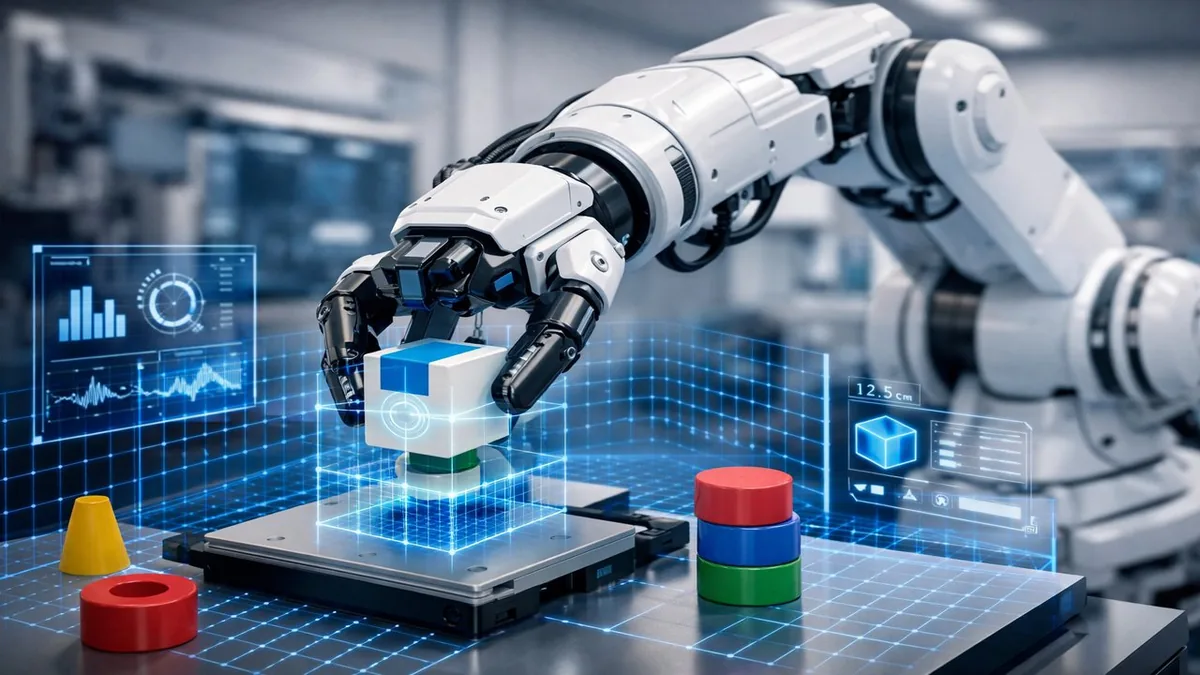

Google DeepMind has unveiled Gemini Robotics-ER 1.6, an updated model for physical robots that the company says achieves significant breakthroughs in spatial and physical reasoning. The release marks another step in DeepMind's push to bring frontier AI capabilities from digital environments into the physical world.

What Gemini Robotics-ER 1.6 Does Differently

The "ER" in the model name stands for Embodied Reasoning, an approach in which AI systems are trained not just to process language and images, but to understand the physical constraints of real-world manipulation tasks. Gemini Robotics-ER 1.6 can reason about object permanence, spatial relationships, forces, and the consequences of physical actions more reliably than previous versions could.

In benchmarks published by DeepMind, the model shows marked improvements on tasks requiring precise manipulation, such as picking up irregularly shaped objects, stacking items under physical stability constraints, and navigating cluttered environments where not everything is visible at once.

The Spatial Reasoning Breakthrough

Spatial reasoning has been a persistent weak point for AI systems trained primarily on text and 2D image data. Knowing that a cup placed on a sloped surface may slide or tip over, or that inserting one object into another takes a precise angle and force, requires a fundamentally different kind of world model than recognizing a cat in a photo.
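To make the cup example concrete, here is a minimal Python sketch of the kind of physical constraint involved. It is a textbook rigid-body check, not DeepMind's method: the function name `rests_stably` and its parameters are hypothetical, and the object is assumed to be a rigid, cup-like cylinder on a flat incline. Such an object slides when the tangent of the slope angle exceeds the friction coefficient, and tips when it exceeds the ratio of base radius to center-of-mass height.

```python
import math


def rests_stably(slope_deg: float, friction: float,
                 base_radius: float, com_height: float) -> bool:
    """Return True if a rigid, cup-like object neither slides nor tips.

    Illustrative assumptions (not from the article): a flat incline,
    a circular base, and a center of mass located directly above the
    center of the base at height `com_height`.
    """
    slope = math.tan(math.radians(slope_deg))

    # Sliding: the pull along the slope exceeds the maximum static friction.
    slides = slope > friction

    # Tipping: the vertical line through the center of mass
    # falls outside the downhill edge of the base.
    tips = slope > base_radius / com_height

    return not (slides or tips)


if __name__ == "__main__":
    # A squat cup on a gentle slope.
    print(rests_stably(5, friction=0.4, base_radius=0.04, com_height=0.05))
    # The same cup on a steep slope.
    print(rests_stably(30, friction=0.4, base_radius=0.04, com_height=0.05))
```

A model with genuine physical intuition has to make judgments like this from camera input alone, without being handed the friction coefficient or the center of mass.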

DeepMind's approach combines simulation-based training at scale with data from physical robot interactions, allowing the model to develop intuitions about physics that generalize across different robot hardware and real-world environments. This generalization is what separates a useful robotics model from one that only works in controlled lab conditions.

Commercial and Industrial Implications

The applications are substantial: warehouse automation, surgical assistance, manufacturing quality control, and household robotics all depend on reliable spatial reasoning and precise manipulation. Current industrial robots are fast and precise within narrow programmed parameters, but they break down immediately when confronted with variability. AI-driven robots like those running Gemini Robotics models could handle the long tail of exceptions that rigid automation cannot.

DeepMind has partnerships with several robotics hardware companies and is positioning Gemini Robotics models as a platform layer: the intelligence that different robot bodies can plug into, much as Android unified different smartphone hardware.

The Competition Is Heating Up

DeepMind's release comes amid an intensifying race to build capable embodied AI. OpenAI has invested in Figure AI, Amazon's Astro team is working on home robots, and several well-funded startups, including 1X Technologies and Physical Intelligence, are pursuing general-purpose robot AI. The company that establishes the dominant robotics foundation model could capture enormous value as physical automation accelerates across every industry.

The Bottom Line

Gemini Robotics-ER 1.6 isn't a consumer product yet; it's a research and platform milestone. But the spatial reasoning improvements it demonstrates bring the vision of truly general-purpose robots meaningfully closer. DeepMind is making a credible case that AI that understands physics is coming, and that it intends to lead that wave.
