The Last Mile of Generative AI: Why the Future of Content is Edit-First

The promise of generative AI for content teams has always been speed, but the reality often involves a frustrating "uncanny valley" at the finish line. You prompt a high-end model for a specific editorial hero image—perhaps a futuristic workspace or a specialized medical laboratory—and the result is 90% perfect. However, that remaining 10% is a disaster: a stray sixth finger on a hand, a nonsensical piece of text on a wall, or a background element that violates brand safety guidelines.

In a professional publishing environment, 90% is essentially 0%. You cannot hit "publish" on an asset that features anatomical anomalies or visual artifacts that signal "cheap AI." This is the "last mile" of content production, where the friction between a raw generation and a finished asset usually grinds a workflow to a halt. The solution isn't necessarily better prompting; it is the shift from a generation-first mindset to an edit-first mindset.

The Disconnect Between Prompting and Publishing

The editorial industry initially fell into the trap of the "perfect prompt." The theory was that if you could just find the right combination of tokens and weights, the AI would output a ready-to-use file. For high-velocity content teams, this is a fallacy. Experimenting with fifty variations of a prompt to fix a single lighting issue is a massive drain on resources.

In a traditional workflow, a designer would simply retouch the error in seconds. In an AI-native workflow, we are seeing a return to this logic. The true value for content teams is no longer found in the initial text-to-image "magic trick," but in the surgical capabilities of an AI Photo Editor that can refine, upscale, and format assets for specific platform requirements. We are moving from "prompt engineering" to "asset refinement" as the core skill set for modern creators.

The Role of the Editor in the Multi-Modal Stack

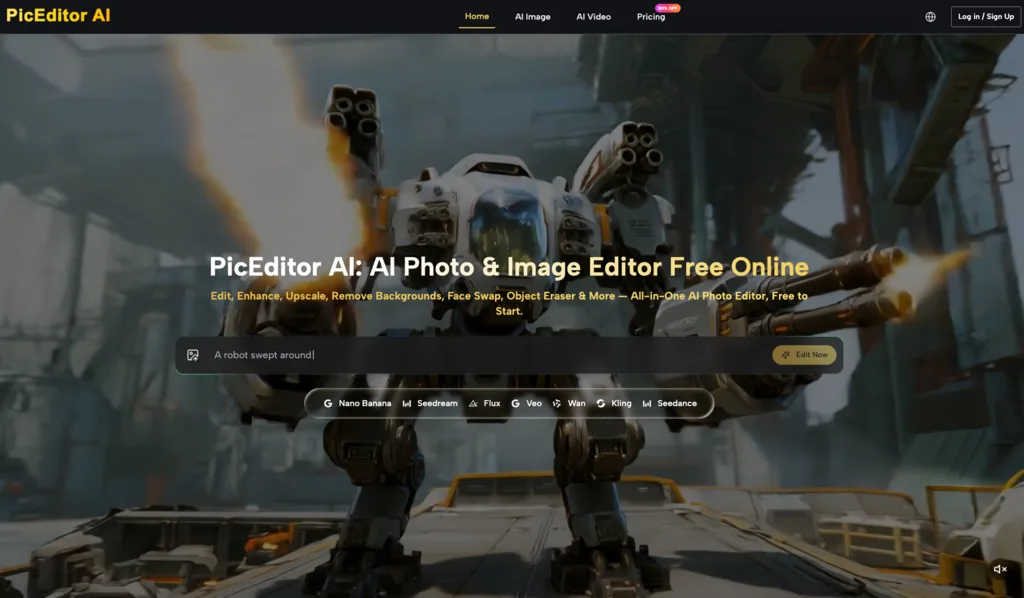

Most professional creators aren't loyal to a single model. They might use Flux for its realism, Nano Banana for specific stylized outputs, or Seedream for conceptual sketches. This fragmented landscape creates "tool fatigue." Jumping between a standalone generation interface and heavy desktop design software creates a bottleneck.

A consolidated AI Image Editor acts as a necessary bridge. When one workspace houses several generative models, such as Google Veo for video or Kling for motion, alongside surgical tools like background removal and object erasers, the workflow becomes fluid. Instead of exporting a file, importing it elsewhere, and re-exporting it, a creator can generate the base layer and immediately begin the "last mile" tasks:

- Background Removal: Isolating a generated subject for use in a multi-layered CMS layout.
- Object Eraser: Removing the inevitable "AI hallucinations" like floating debris or nonsensical artifacts.
- Upscaling: Converting a 1024x1024 generation into a high-resolution file suitable for 4K displays or print headers without losing texture.
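The upscaling step comes down to simple arithmetic. The sketch below is a minimal illustration that assumes the upscaler only offers whole-number factors (2x, 4x) and that any overshoot is cropped afterwards; it computes the smallest factor whose output covers a target resolution. The function name `required_upscale_factor` is hypothetical and not part of any editor's API.

```python
import math


def required_upscale_factor(src_w: int, src_h: int, dst_w: int, dst_h: int) -> int:
    """Smallest integer upscale factor whose output covers dst_w x dst_h.

    Assumes (hypothetically) an upscaler limited to integer factors and a
    crop afterwards to match the target aspect ratio.
    """
    if min(src_w, src_h, dst_w, dst_h) <= 0:
        raise ValueError("dimensions must be positive")
    # Scale needed along each axis; the larger one decides the factor.
    needed = max(dst_w / src_w, dst_h / src_h)
    # Never "upscale" below 1x.
    return max(1, math.ceil(needed))


# A 1024x1024 generation targeted at a 3840x2160 (4K UHD) header:
factor = required_upscale_factor(1024, 1024, 3840, 2160)
print(factor)  # 4
print(1024 * factor, 1024 * factor)  # 4096 4096, before cropping to 3840x2160
```

For a square source, the 4x pass yields 4096x4096, and cropping that to a 16:9 UHD frame keeps only about 53% of the image height (2160 of 4096 pixels), which is one reason to generate at the target aspect ratio when the model allows it.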

The Iterative Loop: Moving Beyond Zero-Shot Prompting

The "zero-shot" approach—asking the AI for a final result in one go—is increasingly being replaced by image-to-image workflows. For content teams, this provides a level of control that text prompts simply cannot match. If you have a specific compositional layout required by your website's CSS, you can provide a rough sketch or a low-fi stock photo and use an AI Image Editor to transform it into a brand-consistent asset.

This iterative loop is especially critical for maintaining visual consistency across a series of blog posts or social media assets. Using a "face swap" tool, for instance, allows a brand to maintain the same "character" or brand ambassador across different scenes and contexts without the expense of multiple photo shoots. It’s about taking a static, singular output and turning it into a flexible, reusable asset pipeline.

Technical Constraints and the Limits of "Good Enough"

It is important to maintain a level of skepticism about the current state of these tools. While the progress is rapid, AI Photo Editor technology still faces significant hurdles that require human intervention.

One notable limitation is the "hex code drift." If your brand requires a very specific Pantone or hex code, generative models often struggle to maintain color accuracy across different lighting conditions within the image. You might prompt for "corporate blue," but the AI will interpret that based on the scene's shadows, often requiring a manual color grade afterward to ensure it matches the brand book.
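Hex code drift can at least be measured before a human decides on a color grade. The sketch below is a minimal example that assumes you have already sampled an RGB value from the brand element in the rendered image (sampling itself is out of scope). It converts both colors from sRGB to CIELAB and compares them with the CIE76 ΔE formula, the simplest standard color-difference metric; CIEDE2000 is more perceptually accurate if you need it. The function names and the default tolerance of 3.0 are illustrative assumptions, not a brand-book standard.

```python
import math

# D65 reference white for sRGB, normalized so that Y = 1.0.
_WHITE = (0.95047, 1.0, 1.08883)


def hex_to_rgb(hex_code: str) -> tuple:
    """Parse '#RRGGBB' (leading '#' optional) into 0-255 integers."""
    h = hex_code.lstrip("#")
    if len(h) != 6:
        raise ValueError(f"expected 6 hex digits, got {hex_code!r}")
    return tuple(int(h[i:i + 2], 16) for i in (0, 2, 4))


def _srgb_to_linear(c: int) -> float:
    # Undo the sRGB transfer curve (gamma) for one 0-255 channel.
    v = c / 255.0
    return v / 12.92 if v <= 0.04045 else ((v + 0.055) / 1.055) ** 2.4


def hex_to_lab(hex_code: str) -> tuple:
    """Convert an sRGB hex color to CIELAB (D65 white point)."""
    r, g, b = (_srgb_to_linear(c) for c in hex_to_rgb(hex_code))
    # Linear sRGB -> CIE XYZ using the standard D65 matrix.
    x = 0.4124 * r + 0.3576 * g + 0.1805 * b
    y = 0.2126 * r + 0.7152 * g + 0.0722 * b
    z = 0.0193 * r + 0.1192 * g + 0.9505 * b

    def f(t: float) -> float:
        # Cube root, with the linear segment CIELAB uses near black.
        d = 6 / 29
        return t ** (1 / 3) if t > d ** 3 else t / (3 * d * d) + 4 / 29

    fx, fy, fz = (f(v / w) for v, w in zip((x, y, z), _WHITE))
    return (116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz))


def delta_e76(hex_a: str, hex_b: str) -> float:
    """CIE76 color difference: Euclidean distance in CIELAB."""
    return math.dist(hex_to_lab(hex_a), hex_to_lab(hex_b))


def within_brand_tolerance(sampled_hex: str, brand_hex: str,
                           tolerance: float = 3.0) -> bool:
    # Hypothetical gate: False means the asset needs a manual color grade.
    return delta_e76(sampled_hex, brand_hex) <= tolerance


print(round(delta_e76("#000000", "#FFFFFF"), 1))  # 100.0
```

A `False` from `within_brand_tolerance` is the cue to send the asset to a manual color grade rather than publishing it as is.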

Furthermore, text rendering remains unreliable. While models like Flux have improved significantly, they still frequently fail on complex signage or fine print. A creator should never assume a generated sign is accurate; it almost always requires a secondary pass for legibility.

There is also the issue of resolution "hallucination." When upscaling an image by 4x, the AI essentially guesses what the missing pixels should look like: a 4x upscale multiplies the pixel count by 16, leaving only one source pixel for every 16 in the output. In some cases, this can lead to a "plastic" or over-sharpened look that degrades the professional feel of the image. Knowing when to stop the AI and switch to a manual or vector-based fix is a judgment call that, for now, only a human can make.

Systems Thinking: Building a Repeatable Asset Pipeline

For a Creative Operations Lead, the goal isn't just to make one good image; it’s to build a system that can produce a thousand. This requires standardized processing steps. A repeatable pipeline might look like this:

-

Generation: Using a model like Nano Banana to create the core concept.

-

Surgical Editing: Using an AI Photo Editor to remove artifacts and refine composition.

-

Enhancement: Applying AI-driven upscaling to meet technical delivery specs.

-

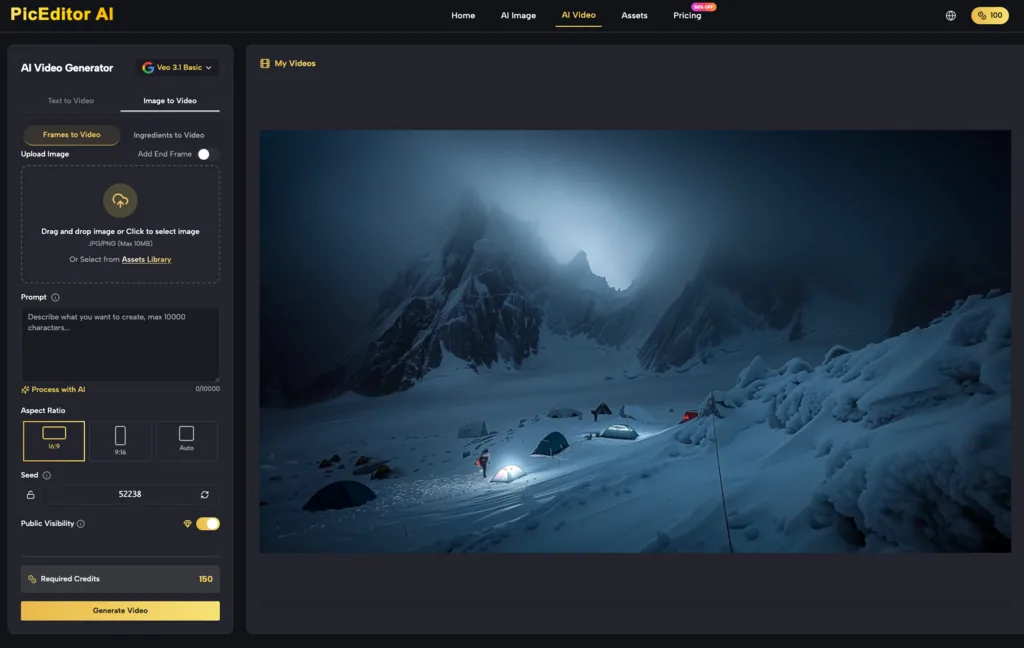

Motion Integration: Passing the refined static image into a tool like Kling or Veo for subtle animation.
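Those four steps become repeatable once they are expressed as an ordered list of stages that every asset passes through. The sketch below is a minimal, hypothetical structure: `Asset`, the stage functions, and the 3840-pixel width gate are illustrative assumptions, and the generation, editing, and animation stages are placeholders that only record their names rather than calling Nano Banana, Kling, Veo, or any real editor API.

```python
from dataclasses import dataclass, replace
from typing import Callable


@dataclass(frozen=True)
class Asset:
    """Hypothetical asset record; a real pipeline would also carry pixel data."""
    asset_id: str
    width: int
    height: int
    history: tuple = ()  # audit trail of stage names, in order


Stage = Callable[[Asset], Asset]


def _log(asset: Asset, step: str, **changes) -> Asset:
    # Return a new Asset with the step appended and any fields updated.
    return replace(asset, history=asset.history + (step,), **changes)


def generate(asset: Asset) -> Asset:
    # Placeholder for a text-to-image call (model and API unspecified).
    return _log(asset, "generate")


def surgical_edit(asset: Asset) -> Asset:
    # Placeholder for artifact removal and composition fixes in an editor.
    return _log(asset, "surgical_edit")


def upscale(factor: int) -> Stage:
    # Build a stage that multiplies both dimensions by an integer factor.
    def stage(asset: Asset) -> Asset:
        return _log(asset, f"upscale_x{factor}",
                    width=asset.width * factor, height=asset.height * factor)
    return stage


def require_min_width(min_width: int) -> Stage:
    # Gate: stop the pipeline if the asset misses the delivery spec.
    def stage(asset: Asset) -> Asset:
        if asset.width < min_width:
            raise ValueError(f"{asset.asset_id}: width {asset.width} < {min_width}")
        return _log(asset, "check_min_width")
    return stage


def animate(asset: Asset) -> Asset:
    # Placeholder for handing the cleaned still to a video model.
    return _log(asset, "animate")


def run_pipeline(asset: Asset, stages: list) -> Asset:
    # Apply each stage in order; any exception halts the run.
    for stage in stages:
        asset = stage(asset)
    return asset


PIPELINE = [generate, surgical_edit, upscale(4), require_min_width(3840), animate]

final = run_pipeline(Asset("hero-001", 1024, 1024), PIPELINE)
print(final.width, final.height, final.history)
```

Because every stage takes and returns an `Asset`, a team can add a new gate (a brand-color check, say) or swap a model without rewriting the rest of the pipeline, and the `history` tuple records exactly which steps each published asset went through. Placing the quality gate before `animate` mirrors the point that glitches should be caught before motion is added.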

This system can be significantly more cost-effective than traditional stock image licensing, which often results in generic, overused visuals. However, the ROI of an AI-integrated workflow is realized only if the editing layer is fast. If the editing takes longer than a traditional Photoshop session, the time saved at the generation stage is lost. The focus must be on tools that offer "low-latency" editing: features that work in a browser and don't require high-end hardware.

The Convergence of Image and Motion

As we look toward the next year of content production, the line between static images and video is blurring. A high-quality image is no longer the end product; it is often the "seed" for a video generation. A refined image, cleaned of all artifacts in an AI Image Editor, serves as a much more stable source for photo animation.

If the source image has a visual glitch, the video tool will animate it along with everything else, often magnifying it until it ruins the final clip. This makes the precision of the initial photo edit even more critical.

However, there is a looming risk of "visual homogenization." As more teams use the same baseline generative models, the internet is becoming saturated with a specific "AI aesthetic." To stand out, content teams must use their editing tools not just to fix errors, but to inject brand-specific personality. The competitive advantage no longer lies in having access to AI, since everyone has that, but in the precision and creativity of the editing layer.

Conclusion: Precision as a Competitive Advantage

The "last mile" of editorial production is where professional work is distinguished from amateur experiments. While it is tempting to focus on the power of the generation engines, the actual utility for a functioning content team lies in the refinement tools.

Whether it is removing a distracting background element, upscaling for a high-res display, or swapping a face for localized marketing, the AI Photo Editor is the tool that makes generative media publishable. The future of content isn't just about what you can generate; it’s about what you have the discipline to refine. Human editorial oversight, supported by surgical AI tools, remains the only way to ensure that the final asset is not just "AI-generated," but truly "brand-ready."