OpenAI Released an Open-Source Tool That Automatically Redacts Your Private Data

OpenAI quietly released an open-source privacy filter that automatically detects and redacts personally identifiable information (PII) from text datasets. At 50 million parameters, it is small enough to run efficiently in enterprise pipelines — and it is free. This is a genuinely useful tool that addresses one of the biggest practical blockers to enterprise AI adoption.

What's Actually Happening

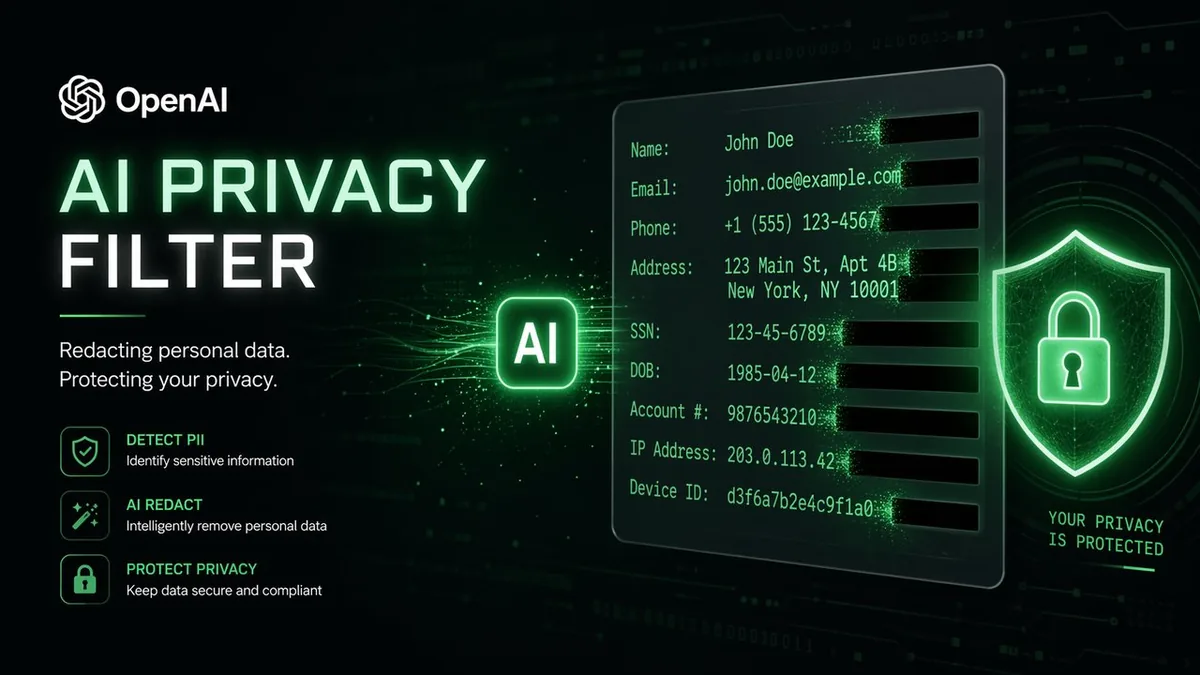

The model scans text for PII (names, email addresses, phone numbers, social security numbers, financial account numbers, addresses, and other identifying data) and redacts it automatically before the text is processed by larger AI models. With 50M parameters, it is fast and cheap to run, and it does not require enterprise-scale infrastructure to deploy.
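To make the detect-and-redact step concrete, here is a minimal sketch in Python. It is not the OpenAI model or its API: it uses a few illustrative regular expressions as a stand-in detector, and the `PATTERNS` table and `redact` function are hypothetical names chosen for this example. The point is only to show the shape of the step, where each detected span is replaced with a category label before the text goes anywhere else.

```python
import re

# Illustrative patterns only (an assumption for this sketch).
# A learned PII model would take the place of this table.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace every matched span with a bracketed category label."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    sample = "Email jane@example.com or call 555-123-4567. SSN 123-45-6789."
    print(redact(sample))
```

Fixed patterns like these cannot catch free-form items such as personal names or street addresses, which is presumably why a trained model is used for this job rather than a list of rules.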

OpenAI released it as open source, meaning companies can deploy it on their own infrastructure without sending data to OpenAI's servers. For regulated industries, that on-premises deployment option is not a nice-to-have; it is often a compliance requirement.

Why It Matters

One of the most common blockers to enterprise AI adoption is the question: "What happens to our sensitive data?" Legal, HR, finance, and healthcare teams generate documents full of PII. Feeding those documents to AI models without sanitization creates real compliance risk under GDPR, HIPAA, and similar frameworks.

A fast, accurate, open-source PII filter removes that blocker. Companies can now sanitize datasets before processing, which reduces how much regulated data ever reaches a downstream model and, with it, the legal exposure of an AI deployment. This is especially significant for companies that want to use ChatGPT Enterprise or similar tools with real customer data. Related: OpenAI's workspace agents become more deployable with this kind of privacy infrastructure in place.
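"Sanitize before processing" can be sketched as a small pipeline stage that sits in front of whatever model consumes the data. The sketch below is an assumption about how a team might wire this up, not something OpenAI documents: `sanitize_records` and `placeholder_redactor` are hypothetical names, and the placeholder only handles email addresses so the example stays runnable. In practice the `redactor` argument would be a call into the deployed PII filter.

```python
import re
from typing import Callable, Iterable, Iterator

# Stand-in detector for this sketch; it only recognises email addresses.
_EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def placeholder_redactor(text: str) -> str:
    """Hypothetical redactor; swap in the real PII filter here."""
    return _EMAIL.sub("[EMAIL]", text)


def sanitize_records(
    records: Iterable[dict],
    text_fields: list[str],
    redactor: Callable[[str], str],
) -> Iterator[dict]:
    """Yield copies of each record with the named text fields redacted."""
    for record in records:
        clean = dict(record)  # shallow copy so the source record is untouched
        for field in text_fields:
            value = clean.get(field)
            if isinstance(value, str):
                clean[field] = redactor(value)
        yield clean


if __name__ == "__main__":
    tickets = [{"id": 1, "note": "Contact jane@example.com about the refund"}]
    for row in sanitize_records(tickets, ["note"], placeholder_redactor):
        print(row)
```

Passing the redactor in as a function keeps the pipeline independent of any one detector, so the same stage works whether redaction comes from rules, a local model, or both.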

My Take

The interesting strategic move here is the open-source release. OpenAI could have kept this as a proprietary feature of ChatGPT Enterprise. Instead, they are giving it away — which builds trust with enterprise security teams and makes the entire OpenAI ecosystem more deployable. Every company that deploys this filter becomes more likely to also use OpenAI's paid products for the AI tasks downstream.

The 50M parameter size is the right call. A massive model for PII detection would be slow and expensive. This is purpose-built for throughput, not complexity — exactly what production pipelines need.

Frequently Asked Questions

What types of PII does it detect? Names, emails, phone numbers, addresses, SSNs, financial identifiers, and other standard PII categories.

Can it be run on-premises? Yes — it is open source and deployable on private infrastructure, critical for regulated industries.

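How accurate is it? OpenAI has not published detailed benchmark numbers. A 50M-parameter model can be enough for a narrow classification task like PII detection, but parameter count alone says nothing about accuracy, so teams should measure it on their own data before relying on it.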
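Without published benchmarks, one reasonable approach is to hand-label a small sample of your own documents and compare the filter's output against it. The sketch below assumes detections are represented as `(start, end, label)` tuples and scores exact matches only; that representation and the `span_precision_recall` name are choices made for this example, not part of any OpenAI tooling.

```python
Span = tuple[int, int, str]  # (start offset, end offset, PII category)


def span_precision_recall(
    predicted: set[Span], gold: set[Span]
) -> tuple[float, float]:
    """Return (precision, recall) counting only exact span-and-label matches."""
    true_positives = len(predicted & gold)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    return precision, recall


if __name__ == "__main__":
    # Hand-labelled spans for one document (made-up offsets for illustration).
    gold = {(0, 4, "NAME"), (10, 26, "EMAIL"), (30, 42, "PHONE")}
    # What a filter reported for the same document.
    predicted = {(0, 4, "NAME"), (10, 26, "EMAIL"), (50, 55, "NAME")}
    precision, recall = span_precision_recall(predicted, gold)
    print(f"precision={precision:.2f} recall={recall:.2f}")
```

For a privacy filter, recall is usually the number to watch: a missed identifier leaks data, while a false positive only removes a little extra text.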