OpenAI Launches Chronicle: A New AI Feature Combining Codex, Memory, and Search

OpenAI has introduced Chronicle, a new AI capability that combines the code-generation power of Codex with persistent memory and real-time search. The feature is designed to give developers and power users a more context-aware, long-horizon AI assistant that can track projects, recall past interactions, and pull in current information.

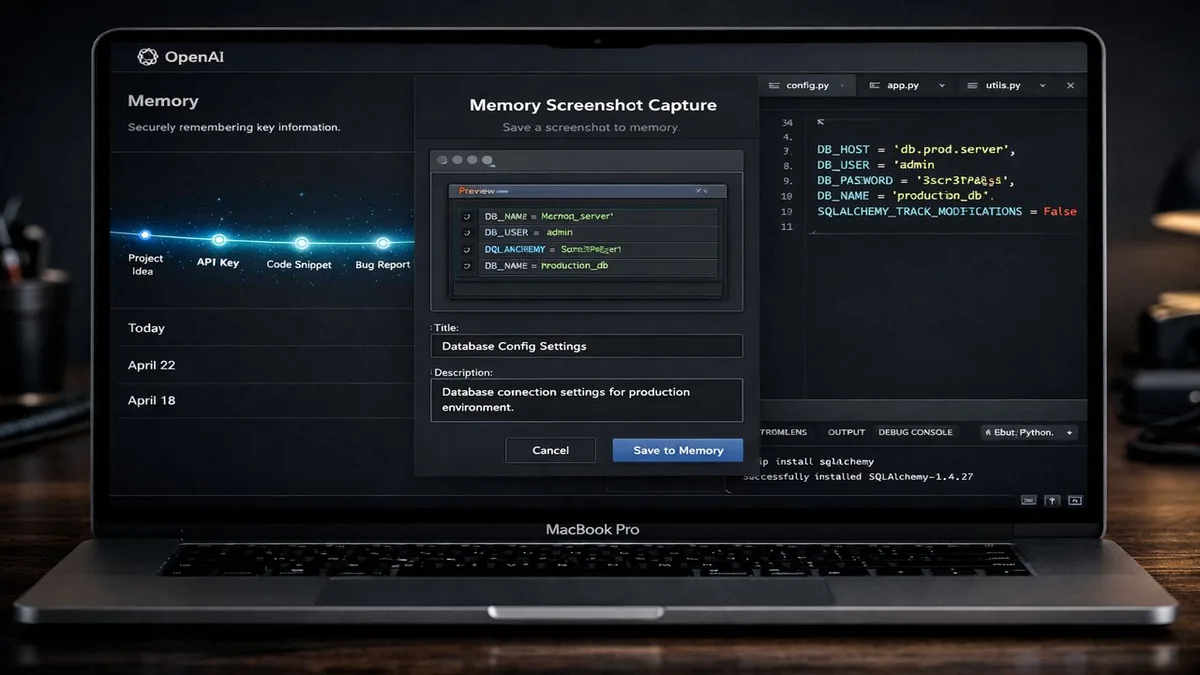

What Chronicle Does

Chronicle integrates three capabilities into a single workflow: Codex for code generation and execution, Memory for retaining user context across sessions, and Search for pulling in live web data. Together, they let ChatGPT maintain an ongoing understanding of a user's project: it can remember decisions, track progress, and reference current documentation or news, so users do not have to re-explain context each time.

Why It Matters for Developers

For software developers, Chronicle addresses a core limitation of current AI assistants: the lack of persistent project context. A developer working on a long-running codebase can now expect ChatGPT to remember architecture decisions from previous sessions, reference the latest library documentation, and generate code that stays consistent with established patterns, without restating those patterns in every prompt.

Memory and Privacy Controls

OpenAI says users have full control over what Chronicle remembers. The memory system includes explicit controls to view, edit, or delete stored information, and organizations deploying ChatGPT Enterprise can configure memory policies at the workspace level. The feature builds on OpenAI's earlier memory rollout but extends it with project-level context and code awareness.
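To make the view, edit, and delete controls concrete, here is a minimal Python sketch of how such a memory store could be modeled. It is purely illustrative: `MemoryStore`, `WorkspacePolicy`, and every field and method name below are hypothetical, and nothing here reflects OpenAI's actual implementation or API, which the announcement does not describe.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class WorkspacePolicy:
    """Hypothetical workspace-level policy; field names are assumptions."""
    memory_enabled: bool = True
    max_entries: Optional[int] = None  # None means no cap on stored memories


@dataclass
class MemoryStore:
    """Hypothetical per-user memory store with view/edit/delete controls."""
    policy: WorkspacePolicy = field(default_factory=WorkspacePolicy)
    _entries: Dict[int, str] = field(default_factory=dict)
    _next_id: int = 1

    def remember(self, text: str) -> Optional[int]:
        """Store a memory if the policy allows it; return its id, else None."""
        if not self.policy.memory_enabled:
            return None
        cap = self.policy.max_entries
        if cap is not None and len(self._entries) >= cap:
            return None
        entry_id = self._next_id
        self._entries[entry_id] = text
        self._next_id += 1
        return entry_id

    def view(self) -> List[Tuple[int, str]]:
        """Return all stored memories as (id, text) pairs, ordered by id."""
        return sorted(self._entries.items())

    def edit(self, entry_id: int, text: str) -> bool:
        """Replace the text of an existing memory; False if the id is unknown."""
        if entry_id not in self._entries:
            return False
        self._entries[entry_id] = text
        return True

    def delete(self, entry_id: int) -> bool:
        """Remove a memory; False if the id is unknown."""
        return self._entries.pop(entry_id, None) is not None


if __name__ == "__main__":
    store = MemoryStore()
    note = store.remember("Project uses PostgreSQL for persistence")
    store.edit(note, "Project migrated from PostgreSQL to SQLite")
    print(store.view())
    store.delete(note)
```

In this sketch the workspace policy is checked only when a memory is written, so an administrator who disables memory stops new entries while users can still view, edit, or delete what is already stored. Whether Chronicle behaves this way is not stated in the announcement.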

Availability

Chronicle is rolling out first to ChatGPT Plus and Pro subscribers, with Enterprise and Team plan availability expected shortly after. OpenAI has not announced a rollout date for the free tier, saying that the feature's resource requirements currently limit broader availability.

The Bottom Line

OpenAI's Chronicle marks a meaningful step toward AI assistants that work alongside developers as long-term collaborators rather than one-shot tools. By combining memory, code execution, and search, it targets the loss of context between sessions that has been a persistent limitation of LLM-based assistants.