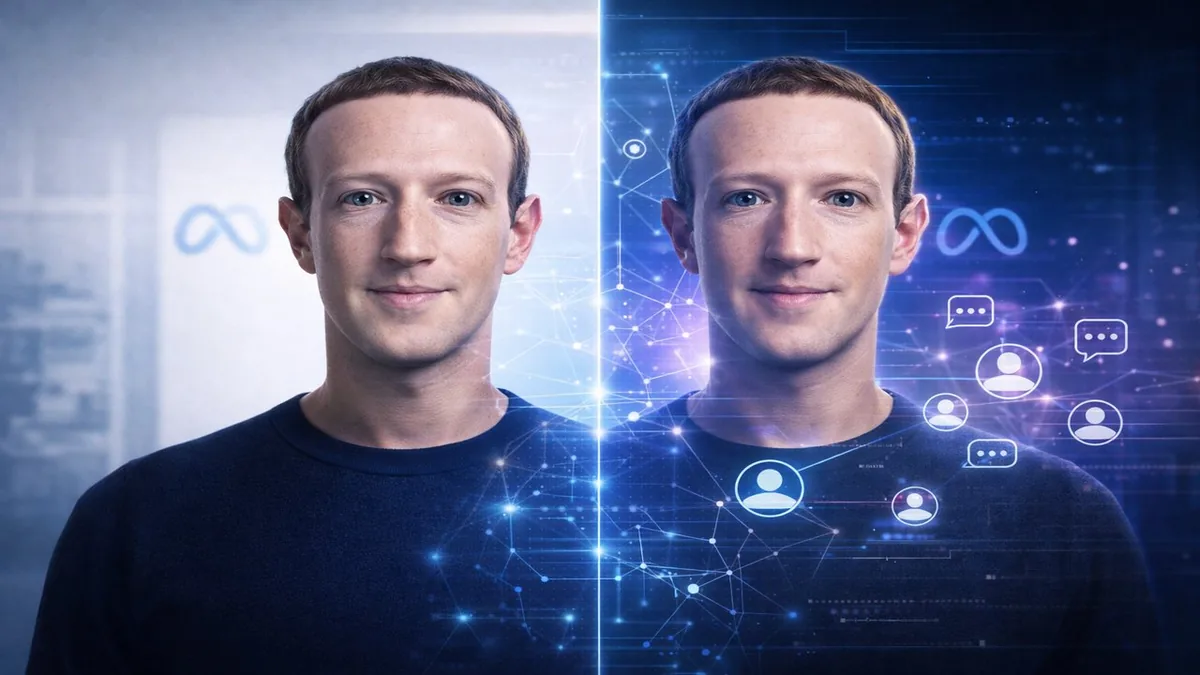

Meta Is Building a Photorealistic AI Version of Mark Zuckerberg for Employee Feedback

Meta is developing photorealistic AI characters modeled on real people, including a digital version of Mark Zuckerberg that employees can interact with to give feedback to the CEO, the Financial Times reported. The project extends Meta's AI character work beyond entertainment into internal corporate use: an AI proxy for executive interaction that the company believes could improve both the quality and the volume of feedback its leadership receives. The initiative belongs to a wider Meta push into synthetic human characters. It ties into the company's AI model development under Muse Spark and its Superintelligence Labs, and it raises significant questions about consent, authenticity, and the future of human-to-human interaction inside large organizations.

AI Characters for Employee Feedback

The concept behind the Zuckerberg AI character is straightforward. Employees who might hesitate to give candid feedback directly to the CEO, or who lack access to him, can interact with a photorealistic AI version trained on his communication style, stated views, and decision-making patterns. The character would respond as Zuckerberg might. This could let employees test ideas, raise concerns, or explore hypothetical scenarios in a lower-stakes setting than a direct conversation with an executive.

The potential corporate applications extend beyond CEO feedback. An AI character modeled on a senior manager could let direct reports rehearse difficult conversations. A character trained on a domain expert could share that expert's knowledge with teams that lack direct access to them. In effect, the technology creates scalable proxies for human expertise and authority within organizations. That is a fundamentally different use of AI characters than entertainment or social media.

The Consent and Authenticity Questions

Meta's AI character work raises questions the company will need to address as these tools move beyond internal pilots. When an AI character is modeled on a real person, its responses carry that person's implied authority and identity, even though a model generates them rather than the individual. If the AI Zuckerberg gives an employee guidance that diverges from what the real Zuckerberg would say, the employee may have no way of knowing. The line between "an AI trained to be like Zuckerberg" and "Zuckerberg" is hard to maintain in practice, particularly when the character is photorealistic. Consent raises a parallel question. A CEO may volunteer to be modeled, but extending the approach to managers or experts means deciding who agrees to have their likeness and communication style replicated, and on what terms. Both challenges are likely to intensify as the technology is deployed more broadly.

Frequently Asked Questions

What is Meta building with AI characters?

Meta is developing photorealistic AI characters modeled on real people, including a digital version of Mark Zuckerberg that lets employees give feedback to an AI proxy of the CEO. The technology is part of a wider Meta push into synthetic human characters.

Why would a company use an AI version of its CEO?

An AI character modeled on a CEO would give employees a scalable proxy for executive communication. They could use it to give feedback, explore decisions, or raise concerns in a lower-stakes setting than a direct conversation with the CEO. It also lets leadership convey its communication style and priorities at scale.

What are the risks of AI characters modeled on real people?

Key risks include authenticity gaps, where the AI character's responses diverge from what the real person would actually say; consent concerns about how a person's identity and communication style are used; and authority confusion, when employees treat AI-generated responses as authoritative statements from the actual person.

The Bottom Line

Meta's photorealistic AI version of its CEO for employee feedback is a genuinely novel use of AI character technology and a preview of how synthetic humans may reshape organizational communication. The technology cuts both ways. It makes leadership more accessible at scale, and it inserts a layer of AI mediation between employees and the people who actually make decisions. Whether that mediation improves organizational communication or degrades it depends on how transparently and accurately the AI character represents the real person. Meta is in the unusual position of being both the developer of this technology and one of its first institutional test subjects.