Iterative Velocity: Shrinking Launch Asset Production to Real-Time Cycles

The traditional lifecycle of a product launch asset is defined by friction. It begins with a creative brief, moves into a multi-day design phase, and inevitably hits a wall during the review cycle. For product teams, this linear progression is the primary bottleneck. When the marketing requirements change or a stakeholder asks for a "slight adjustment" to a hero image, the timeline resets. This delay isn't just an inconvenience; it is a cost. When speed-to-market is a competitive advantage, the inability to iterate in real time is a liability.

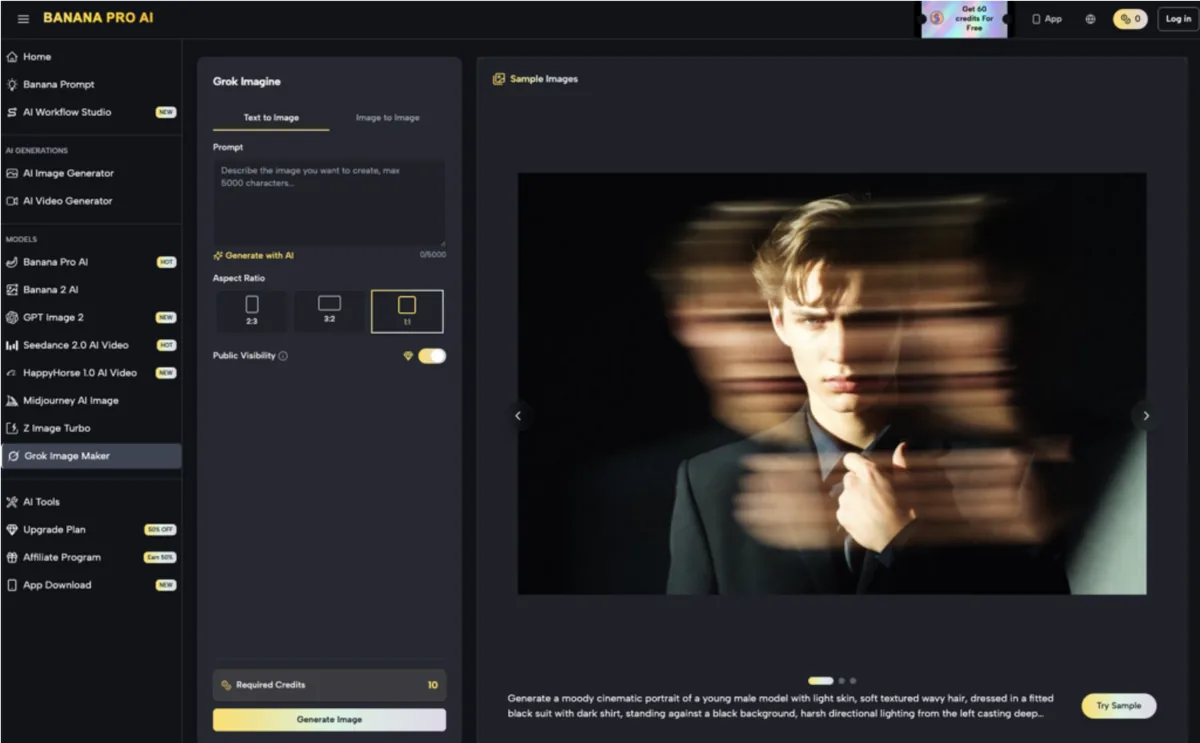

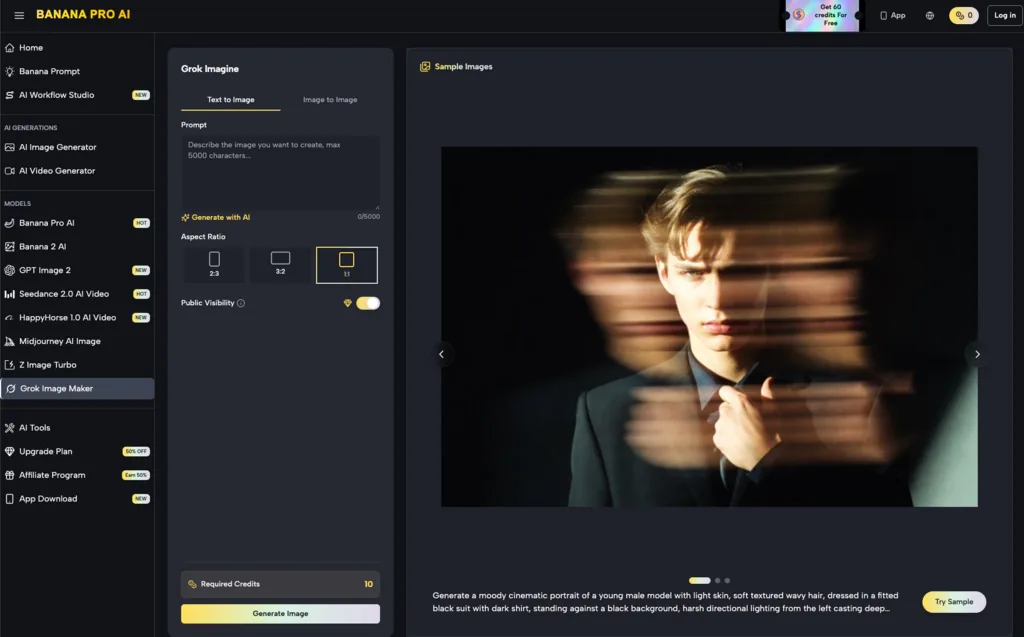

The emergence of creative operations powered by generative models is fundamentally changing this math. We are moving away from a world of "static delivery" toward a paradigm of "iterative velocity." By integrating tools like the AI Image Editor into the core production pipeline, teams are finding that the distance between a concept and a production-ready asset is shrinking from days to minutes.

The High Cost of Static Production Pipelines

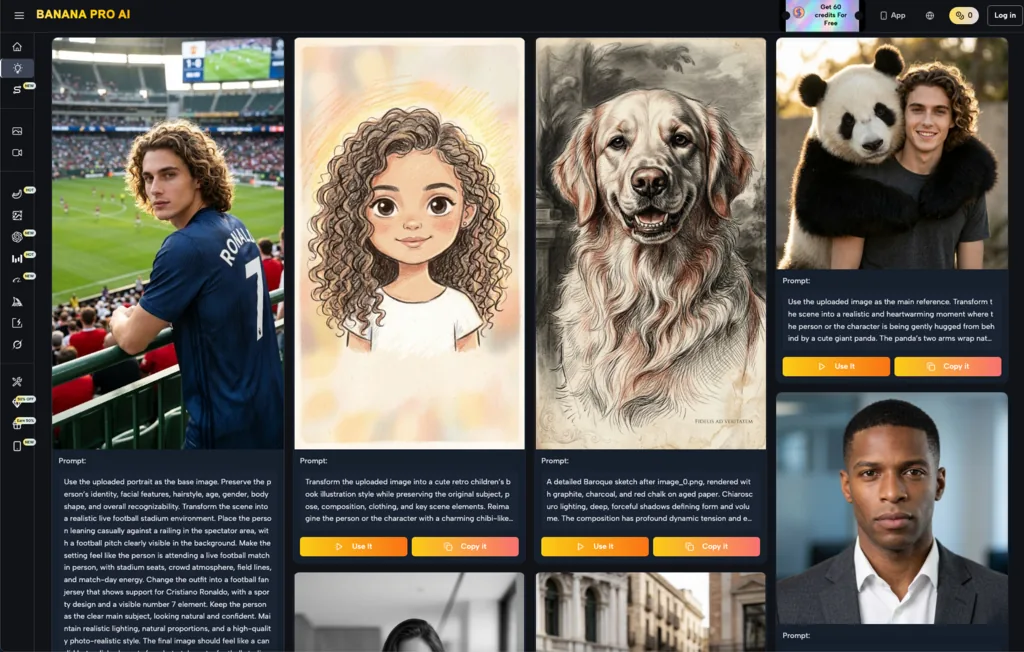

In most organizations, asset creation is treated as a siloed transaction. A product team requests an image; a design team produces it. This works well for high-stakes, long-lead brand campaigns, but it fails for the high-volume needs of modern growth marketing and product launches. When you need twenty variations of a landing page hero to test different demographics, the traditional model breaks.
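To make the "twenty variations" problem concrete, here is a minimal sketch of how a variant list might be enumerated before any image is generated. The audience segments, settings, and prompt template are hypothetical placeholders, not values from a real campaign or a format required by any particular tool.

```python
from itertools import product

# Hypothetical test dimensions; none of these values come from a real campaign.
AUDIENCES = ["students", "remote workers", "small-business owners", "retirees"]
SETTINGS = ["home office", "cafe", "co-working space", "kitchen table", "outdoor patio"]

# Illustrative prompt template, not a format required by any particular tool.
TEMPLATE = "Landing page hero: {audience} using the product in a {setting}"


def build_variants(audiences, settings):
    """Return one prompt spec per audience/setting combination."""
    return [
        {"id": f"hero-{i:02d}", "prompt": TEMPLATE.format(audience=a, setting=s)}
        for i, (a, s) in enumerate(product(audiences, settings), start=1)
    ]


variants = build_variants(AUDIENCES, SETTINGS)
print(len(variants))          # 4 audiences x 5 settings = 20
print(variants[0]["prompt"])
```

Four audiences crossed with five settings already yield twenty briefs, which is why a request-and-wait model struggles once each one needs its own round of feedback.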

The friction is most visible during the feedback loop. A designer provides a draft, the product lead suggests a change in lighting or composition, and the designer goes back to the software for another four-hour block. This back-and-forth can account for roughly 60% of the total time spent on creative production. The goal of using Banana Pro isn't just to generate "more" content; it is to eliminate the dead time between feedback and the revised asset.

Redefining the Brief-to-Asset Pipeline

The shift toward iterative velocity requires a change in how teams approach the creative brief. Instead of a rigid set of instructions, the brief becomes a starting point for exploration. When using a model like Nano Banana, the initial generation serves as a "visual prototype."

This prototyping phase allows product teams to see their ideas manifested instantly. If the composition is off or the vibe doesn't match the brand's aesthetic, they don't wait for a second meeting. They adjust the parameters—the lighting, the lens depth, or the color palette—and regenerate immediately. This creates a tight feedback loop where the creative team and the product team can align on a direction in a single thirty-minute session rather than over a week of emails.

From Generative Chaos to Controlled Output

One of the common critiques of AI-generated visuals is the lack of control. Early tools were notorious for producing "happy accidents" that were difficult to replicate or refine. However, professional-grade workflows have matured. The current focus is on "guided generation," where the user maintains strict control over the final output.

For instance, when a team is working with Nano Banana Pro, they aren't just rolling the dice on a prompt. They are using structural references and style weights to ensure the output adheres to established brand guidelines. This level of control is what makes the technology viable for enterprise-level product launches where "close enough" is not an acceptable standard.

The Canvas Workflow: Real-Time Refinement

The real breakthrough in production velocity isn't the generation of the image itself, but the ability to edit it within a fluid workspace. The AI Image Editor removes the need to jump between multiple heavyweight design programs for basic modifications.

In a standard workflow, if a generated image is 90% perfect but has an unwanted object in the background, a designer would traditionally have to mask it out manually. With contemporary canvas-based workflows, the process is handled through in-painting. The user selects the area, describes the replacement, and the tool blends the new pixels seamlessly into the existing environment.

This "image-to-image" evolution is critical. It allows teams to take a low-fidelity sketch or a basic 3D render and develop it into a high-fidelity marketing asset without losing the original intent. The Banana AI ecosystem facilitates this by keeping the generation and editing tools within the same interface, reducing the cognitive load and technical overhead of switching between tools and file formats.

Addressing the Review Cycle Bottleneck

The review cycle is where velocity usually dies. Stakeholders often struggle to give constructive feedback on abstract concepts. They need to see the visual to know what they don't like.

By shifting to a real-time production model, the review session becomes the production session. Instead of a "reveal" meeting where a designer shows finished work, the team can use a live workshop format. Using Banana Pro to make adjustments on the fly—changing a background from an office setting to a home environment, for example—allows stakeholders to see the impact of their feedback instantly.

The Uncertainty of 'Good Enough'

It is important to acknowledge a significant limitation in this new workflow: the "uncanny valley" of quality. While AI tools can generate stunning visuals, they often struggle with specific technical details, such as accurate text rendering within an image or complex anatomical proportions in busy scenes.

Product teams must be cautious. There is a temptation to accept an asset because it was produced quickly, even if it contains subtle artifacts that might undermine brand trust. At this stage of the technology, a human "quality controller" is still a non-negotiable part of the pipeline. The velocity gains should be spent on more iterations, not on rushing flawed work to production.
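One way to keep the human quality controller from being skipped under deadline pressure is to encode the sign-off as an explicit gate. The sketch below is a hypothetical in-memory model; the class, field names, and example issue are illustrative assumptions and do not correspond to any feature of the tools named in this article.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AssetReview:
    """Hypothetical review record for one generated asset."""
    asset_id: str
    open_issues: List[str] = field(default_factory=list)
    approved_by: Optional[str] = None

    def flag(self, issue: str) -> None:
        """Record an artifact; any earlier approval no longer holds."""
        self.open_issues.append(issue)
        self.approved_by = None

    def resolve(self, issue: str) -> None:
        """Mark a flagged artifact as fixed in a later iteration."""
        self.open_issues.remove(issue)

    def approve(self, reviewer: str) -> None:
        """A named human signs off, but only once nothing is outstanding."""
        if self.open_issues:
            raise ValueError(f"{self.asset_id} still has open issues: {self.open_issues}")
        self.approved_by = reviewer


def can_publish(review: AssetReview) -> bool:
    """Generation speed never bypasses this check."""
    return review.approved_by is not None and not review.open_issues


review = AssetReview("hero-01")
review.flag("garbled text on product label")
print(can_publish(review))   # False: flagged and unapproved
review.resolve("garbled text on product label")
review.approve("design lead")
print(can_publish(review))   # True
```

The point of the design is that approval is tied to a named person and is revoked whenever a new artifact is flagged, so extra iterations feed the gate rather than route around it.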

Bridging Image to Video with Nano Banana Pro

The demand for video content in product launches has surpassed the capacity of most internal creative teams. Creating a 15-second teaser video traditionally requires storyboarding, 3D modeling, animation, and rendering—a process that can take weeks.

With Nano Banana Pro, the bridge between a static image and a motion asset is becoming shorter. By using an image-to-video workflow, a team can take the hero asset they just perfected in the editor and animate it with consistent lighting and textures. This ensures that the visual identity of the launch remains cohesive across different mediums.

However, we must reset expectations regarding video consistency. Current generative video models still face challenges with temporal stability—meaning objects might morph slightly between frames during complex movements. For high-velocity marketing, these tools are excellent for atmospheric backgrounds, product reveals with simple camera pans, and social media teasers. They are not yet a complete replacement for high-end character animation or physics-perfect simulations.

Practical Constraints in High-Velocity Workflows

Transitioning to an AI-driven production cycle isn't as simple as purchasing a subscription. It requires a rethink of asset management and prompt libraries. Teams that succeed are those that treat their prompts and model settings as "source code."

If a product team discovers a specific configuration in Nano Banana that perfectly captures their brand’s aesthetic, that configuration needs to be documented and shared. Without this discipline, the production velocity is lost to "prompt drift," where different team members produce wildly inconsistent results.
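Treating settings as "source code" can be as simple as storing them in a structured, versionable record and fingerprinting it. The following is a minimal sketch under stated assumptions: the `BrandPreset` fields (including `style_weight`) and all example values are hypothetical and are not documented parameters of Nano Banana or any other tool.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace
from typing import Tuple


@dataclass(frozen=True)
class BrandPreset:
    """Hypothetical record of the settings a team wants to reuse."""
    name: str
    prompt_template: str
    lighting: str
    color_palette: Tuple[str, ...]
    style_weight: float  # illustrative knob, not a documented tool parameter


def fingerprint(preset: BrandPreset) -> str:
    """Short, stable hash of the preset contents, useful for spotting drift."""
    canonical = json.dumps(asdict(preset), sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


approved = BrandPreset(
    name="launch-hero-v1",
    prompt_template="{product} on a clean desk, shallow depth of field",
    lighting="soft morning window light",
    color_palette=("#0B3D91", "#F5F5F5"),
    style_weight=0.7,
)

# A teammate quietly changes one setting: the fingerprint no longer matches.
drifted = replace(approved, lighting="hard flash")

print(fingerprint(approved) == fingerprint(replace(approved)))  # True: same contents
print(fingerprint(approved) == fingerprint(drifted))            # False: drift detected
```

Saving the fingerprint alongside each exported asset would let a team trace any image back to the exact preset that produced it, in the same way a build is traced back to a commit.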

Another reality to face is the limitation of model "memory." While tools are getting better at maintaining character or product consistency across multiple generations, they are not yet perfect. If you need a specific, non-existent product prototype to look identical in fifty different lifestyle shots, you will still encounter significant manual work. The "velocity" here applies to the environment and the "vibe," while the product itself often requires a hybrid approach of AI and traditional compositing.

Strategic Implementation for Product Teams

For teams looking to integrate these tools, the most effective path is a staged rollout. Start with "invisible" assets—backgrounds for social posts, textures for UI mockups, or internal presentation visuals. As the team becomes familiar with the AI Image Editor and the nuances of different models, they can move toward more visible hero assets.

The goal is to create a "human-in-the-loop" system. The AI handles the heavy lifting of pixel generation and lighting, while the human designer focuses on composition, brand alignment, and final polish. This division of labor is what allows a single creator to do the work that previously required a small agency.

The Future of Creative Operations

The integration of generative tools into the production pipeline is moving us toward a future where "production time" is no longer a primary constraint on creativity. When you can iterate on a visual concept in real time, you are free to explore more radical ideas. You can test more variations, respond to market trends faster, and deliver a more personalized experience to your audience.

The shift toward iterative velocity is inevitable. As tools like Banana Pro continue to lower the barrier to entry for high-fidelity content creation, the competitive edge will shift from those who can afford the most designers to those who can iterate the fastest. The technology is a force multiplier, but its true value lies in its ability to reclaim the time lost to the friction of the traditional creative process. By embracing these real-time cycles, product teams can finally align their asset production with the speed of their development sprints.