Google Open-Sources 2M Map Paths to Teach AI Spatial Reasoning

The Problem: AI That Can't Read a Map

Ask an AI model to identify the animals in a photo of a zoo, and it does so effortlessly. Ask it to trace a path from the entrance to the reptile house on a zoo map, and it might draw a line straight through a gift shop or an enclosure.

This gap between visual recognition and spatial reasoning has held back multimodal AI models for years. Google researchers have now tackled it head-on with MapTrace, a new dataset and pipeline that teaches AI the fundamentals of map navigation.

The Root Cause: No Training Data for Spatial Navigation

The problem isn't model architecture — it's data. Current multimodal large language models (MLLMs) learn from billions of images and text descriptions. They learn to recognize what's in a scene. But they almost never see explicit training data that teaches the rules of navigation: that paths must be connected, that you cannot walk through walls, that a route is an ordered sequence of adjacent walkable points.
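To make those three rules concrete, here is a minimal sketch of a path checker. It assumes a map reduced to a grid of walkable and blocked cells and treats only up/down/left/right neighbors as adjacent. The names `is_valid_path`, `GRID`, `around_wall`, and `through_wall` are hypothetical and used only for illustration. None of this comes from the MapTrace code.

```python
from typing import List, Sequence, Tuple

Point = Tuple[int, int]  # (row, col) on a hypothetical walkability grid


def is_valid_path(walkable: Sequence[Sequence[bool]], path: List[Point]) -> bool:
    """Return True if every point is walkable and consecutive points are adjacent."""
    if not path:
        return False
    rows, cols = len(walkable), len(walkable[0])
    for r, c in path:
        # Rule: you cannot walk through walls (or off the map).
        if not (0 <= r < rows and 0 <= c < cols) or not walkable[r][c]:
            return False
    for (r1, c1), (r2, c2) in zip(path, path[1:]):
        # Rule: the route is an ordered sequence of adjacent points, with no jumps.
        if abs(r1 - r2) + abs(c1 - c2) != 1:
            return False
    return True


# A 3x3 toy map whose centre cell is a wall.
GRID = [
    [True, True, True],
    [True, False, True],
    [True, True, True],
]

around_wall = [(0, 0), (0, 1), (0, 2), (1, 2), (2, 2)]
through_wall = [(0, 0), (1, 0), (1, 1), (1, 2), (2, 2)]

print(is_valid_path(GRID, around_wall))   # route that skirts the wall
print(is_valid_path(GRID, through_wall))  # route that crosses the wall cell
```

A model that only recognizes objects has no reason to respect either check, which is the gap the dataset is meant to address.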

Hand-labeling map paths at pixel level is painstaking. Annotating at the scale required to train a large model is practically impossible. And many of the best examples — shopping malls, museums, theme parks — are proprietary and can't be collected for research.

The Solution: 2M Synthetic Paths, AI-Verified

Google's answer is a fully automated four-stage pipeline that generates, annotates, and quality-checks map path data at scale — using Gemini 2.5 Pro and Imagen-4 as the core engines:

- Map generation: An LLM generates diverse map prompts — zoos, malls, fantasy theme parks, warehouses — which are then rendered into map images by a text-to-image model.

- Traversable area detection: The system clusters pixels by color to find candidate walkable regions. An AI "Mask Critic" then reviews each candidate and flags whether it represents a realistic, connected path network.

- Graph construction: Valid walkable areas are converted into a navigable graph — nodes at intersections, edges along corridors — making route calculation computable.

- Path generation and validation: Dijkstra's algorithm finds the shortest path between thousands of random start/end pairs on each map. A second AI "Path Critic" reviews the result on the actual map image and approves or rejects based on visual logic.
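The last two stages can be sketched in a few lines of Python. This is a simplified illustration, not Google's implementation. It assumes the walkable mask is already available as a grid of booleans, and it makes every walkable cell a node with unit-cost edges to its four neighbors, rather than placing nodes only at intersections as the article describes. The names `build_graph`, `shortest_path`, and `MASK` are hypothetical.

```python
import heapq
from typing import Dict, List, Optional, Sequence, Tuple

Node = Tuple[int, int]  # (row, col)


def build_graph(mask: Sequence[Sequence[bool]]) -> Dict[Node, List[Node]]:
    """Connect every walkable cell to its walkable 4-neighbours."""
    rows, cols = len(mask), len(mask[0])
    graph: Dict[Node, List[Node]] = {}
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c]:
                continue
            neighbours = []
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nr, nc = r + dr, c + dc
                if 0 <= nr < rows and 0 <= nc < cols and mask[nr][nc]:
                    neighbours.append((nr, nc))
            graph[(r, c)] = neighbours
    return graph


def shortest_path(
    graph: Dict[Node, List[Node]], start: Node, goal: Node
) -> Optional[List[Node]]:
    """Dijkstra with unit edge weights; returns None if the goal is unreachable."""
    if start not in graph or goal not in graph:
        return None
    dist = {start: 0}
    prev: Dict[Node, Node] = {}
    heap = [(0, start)]
    while heap:
        d, node = heapq.heappop(heap)
        if node == goal:
            break
        if d > dist[node]:
            continue  # stale heap entry, a shorter route was already found
        for nxt in graph[node]:
            nd = d + 1
            if nd < dist.get(nxt, float("inf")):
                dist[nxt] = nd
                prev[nxt] = node
                heapq.heappush(heap, (nd, nxt))
    if goal not in dist:
        return None
    # Walk the predecessor links back from the goal to the start.
    path = [goal]
    while path[-1] != start:
        path.append(prev[path[-1]])
    return path[::-1]


# Toy mask: a U-shaped corridor around a two-cell wall.
MASK = [
    [True, True, True],
    [True, False, True],
    [True, False, True],
]

route = shortest_path(build_graph(MASK), (2, 0), (2, 2))
print(route)
```

In the pipeline described above, a route like `route` would then be drawn on the map image and passed to the Path Critic. That review step is a model call and is not reproduced here.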

The result: a dataset of 2 million annotated map image question-answer pairs, now open-sourced on Hugging Face.

The Results: Spatial Reasoning Is a Teachable Skill

Google fine-tuned several MLLMs on just 23,000 paths from the dataset, roughly 1% of the full 2M, and tested them on MapBench, a benchmark of real-world maps the models hadn't seen during training.

The improvements were dramatic:

- Gemini 2.5 Flash: Path error (NDTW, normalized dynamic time warping; lower is better) dropped from 1.29 to 0.87, the best overall performance

- Gemma 3 27B: Success rate (valid path produced) rose by 6.4 percentage points, with error dropping from 1.29 to 1.13
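For readers unfamiliar with the error metric, NDTW stands for normalized dynamic time warping, which scores how far a predicted path strays from a reference path even when the two have different numbers of points. The sketch below shows the general idea. The article does not state the normalization, so dividing by the number of reference points is an assumption made for illustration, and the values it produces are not comparable to the scores above. The function names `dtw` and `normalized_dtw` are hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def dtw(pred: List[Point], ref: List[Point]) -> float:
    """Classic dynamic time warping cost using Euclidean point distance."""
    n, m = len(pred), len(ref)
    inf = float("inf")
    # cost[i][j] = cheapest alignment of pred[:i] with ref[:j]
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = math.dist(pred[i - 1], ref[j - 1])
            cost[i][j] = d + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])
    return cost[n][m]


def normalized_dtw(pred: List[Point], ref: List[Point]) -> float:
    """DTW cost divided by the reference length (an assumed normalization)."""
    if not pred or not ref:
        raise ValueError("both paths must contain at least one point")
    return dtw(pred, ref) / len(ref)


reference = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
shifted = [(0.0, 1.0), (1.0, 1.0), (2.0, 1.0)]  # same shape, one unit off

print(normalized_dtw(reference, reference))  # identical paths give zero error
print(normalized_dtw(shifted, reference))    # a parallel path gives a positive error
```

Under any normalization of this kind, a lower score means the predicted route hugs the reference more closely.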

The key finding: after training, models weren't just more accurate when they succeeded — they were far less likely to fail completely. Spatial reasoning is not an innate property of language models. It's an acquired skill that can be explicitly taught with the right training data.

What This Unlocks

Reliable map-reading AI would open up several practical applications:

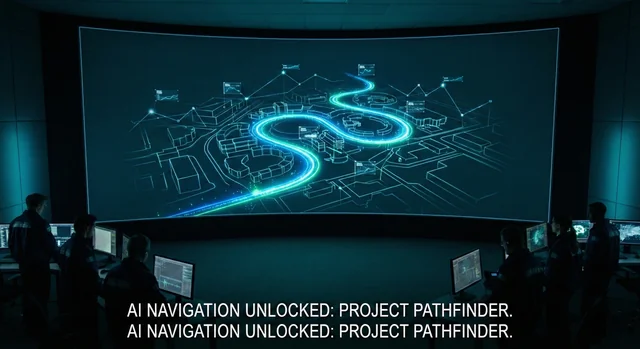

- Navigation tools: AI that can look at a satellite image or a complex subway map and give visually grounded, step-by-step directions

- Robotics: Robots that navigate hospitals, airports, or warehouses by reading a floor plan, without pre-programmed routes

- Accessibility: Tools that can describe a path through a building to a visually impaired person in precise, step-by-step terms

The Bottom Line

Google has open-sourced 2M map path examples and the pipeline to generate more. The research indicates that fine-grained spatial reasoning, one of the last major gaps in multimodal AI, can be narrowed with synthetic data at scale and AI critics doing the quality control. The data is available on Hugging Face for anyone to use.