DeepSeek Is Previewing a New Model That It Claims Closes the Gap With Frontier AI

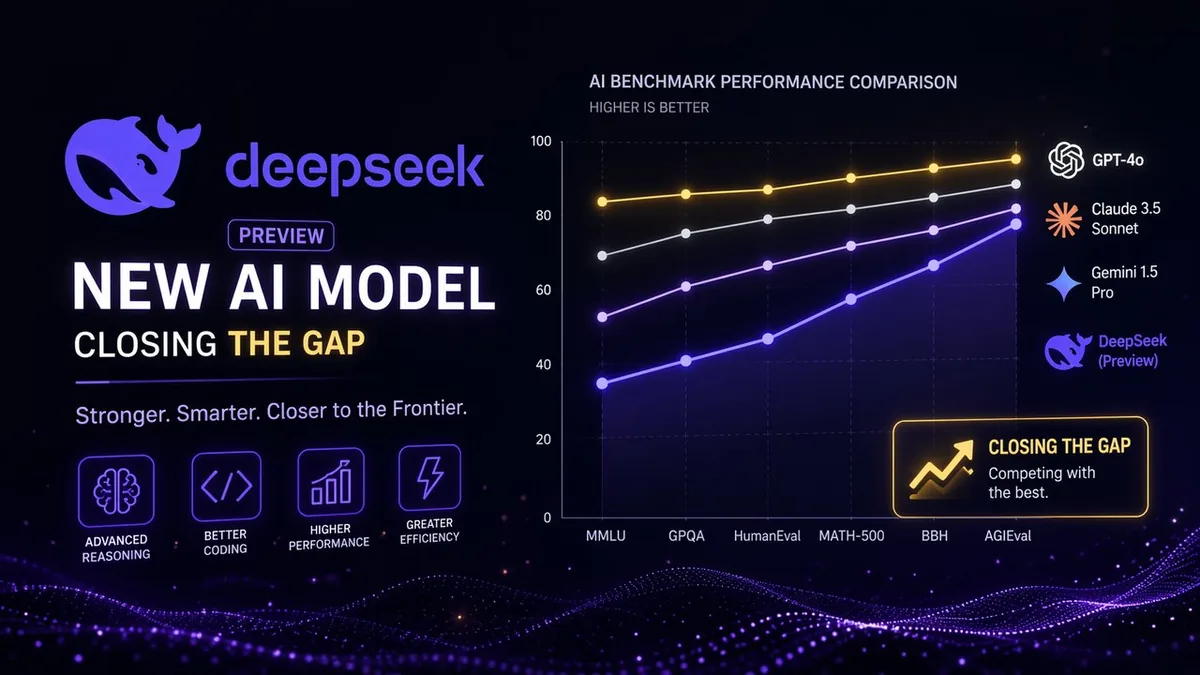

DeepSeek has previewed a new AI model beyond its recently released V4, claiming the upcoming release will close the performance gap with leading frontier models from OpenAI, Anthropic, and Google. It is a bold claim from a lab that has already upended the industry's assumptions about what's achievable at low cost.

What DeepSeek Is Claiming

The preview is light on technical specifics — no architecture details, parameter counts, or benchmark scores have been released. What DeepSeek is signaling is directional: the next model will perform closer to GPT-5.5 and Claude 4 than any previous DeepSeek release. Given that DeepSeek V4 already topped several major coding benchmarks at a fraction of the inference cost of competing models, closing the remaining gap would represent a significant escalation.

Why This Matters Beyond the Benchmark Race

The significance of DeepSeek's trajectory is not just about model capabilities — it's about the cost structure underneath. DeepSeek V4 used roughly 27% of the compute that comparable US frontier models require for inference. If the next model maintains or improves that efficiency ratio while closing the quality gap, it fundamentally changes the economics of AI deployment for every company not called OpenAI, Anthropic, or Google.
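To make the efficiency ratio concrete, here is a rough back-of-the-envelope sketch in Python. It assumes inference cost scales linearly with compute, which is a simplification, and the baseline bill is a hypothetical figure chosen for illustration, not a number from DeepSeek or any provider. Only the 27% ratio comes from the claim above.

```python
def scaled_inference_cost(baseline_cost_usd: float, compute_ratio: float) -> float:
    """Estimate inference cost at a given compute ratio.

    Assumes cost is directly proportional to compute, which ignores
    hardware pricing, utilization, and provider margins.
    """
    if baseline_cost_usd < 0 or compute_ratio < 0:
        raise ValueError("cost and ratio must be non-negative")
    return baseline_cost_usd * compute_ratio


# Ratio cited in the article: roughly 27% of the compute of comparable models.
COMPUTE_RATIO = 0.27

# Hypothetical monthly inference bill on a comparable frontier model (assumption).
baseline_monthly_bill_usd = 100_000.0

estimated_bill_usd = scaled_inference_cost(baseline_monthly_bill_usd, COMPUTE_RATIO)
estimated_savings_usd = baseline_monthly_bill_usd - estimated_bill_usd

print(f"Estimated bill at 27% compute: ${estimated_bill_usd:,.0f}")
print(f"Estimated monthly savings:     ${estimated_savings_usd:,.0f}")
```

Under these assumptions, the same workload would cost a bit more than a quarter of the baseline. Real-world savings depend on how providers price compute, so treat this as an illustration of the ratio rather than a forecast.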

Enterprises currently paying premium rates for frontier model API access would have a credible, open-weight alternative under MIT licensing. That means they could self-host, avoid provider-imposed usage limits, and replace per-token API fees with the cost of running their own infrastructure.

The Geopolitical Dimension

Every DeepSeek release intensifies the debate around US AI export controls. If a Chinese lab can produce frontier-quality models on restricted hardware — which DeepSeek has demonstrated is possible — then the premise of chip export controls as an AI containment strategy comes into question. DeepSeek's next model preview will draw fresh scrutiny from Washington regardless of its technical outcome.

My Take

"Closes the gap" is a marketing phrase until benchmarks say otherwise. But DeepSeek has earned credibility with its previous releases — it has consistently delivered on technical claims that seemed implausible when first announced. The preview alone is significant because it tells the market: the competition isn't over after V4. US frontier labs will not get a rest. And that competitive pressure, more than the specific model capabilities, is what shapes how this industry evolves.
