AWS Partners with Cerebras to Deploy World's Largest AI Chip for Inference

AWS Taps Cerebras for Lightning-Fast AI Inference

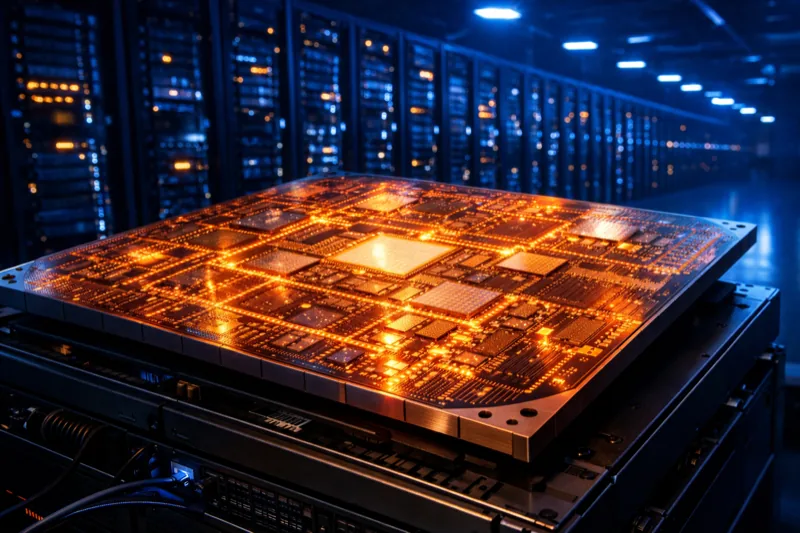

Amazon Web Services has announced a multiyear partnership with Cerebras Systems to deploy the startup's Wafer-Scale Engine (WSE-3) — the world's largest AI processor — inside AWS data centers for AI inference workloads.

The chip is 56 times larger than the largest GPU available today, and Cerebras claims it delivers inference more than 20 times faster than current solutions.

How the Partnership Works

The collaboration introduces a "disaggregated inference" approach through Amazon Bedrock:

- AWS Trainium handles prefill — the initial processing of input prompts

- Cerebras CS-3 handles decode — the token-by-token generation of responses

By splitting these two phases between specialized hardware, the combined system can deliver significantly faster response times than a single-chip architecture that handles both tasks.
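To make the prefill/decode split concrete, here is a minimal toy sketch in Python. It is not the Amazon Bedrock API or anything AWS or Cerebras has published: the names `KVCache`, `prefill`, `decode`, and `disaggregated_generate` are hypothetical, and the arithmetic is only a placeholder for a real model's forward pass. It illustrates the shape of the handoff: one stage processes the whole prompt at once, then passes its state to a second stage that generates tokens one at a time.

```python
from dataclasses import dataclass, field


@dataclass
class KVCache:
    """Toy stand-in for the per-token state that prefill hands to decode."""
    entries: list = field(default_factory=list)


def prefill(prompt_tokens):
    """Process the entire prompt in a single pass and build the cache.

    In a real system this phase is parallel across prompt tokens.
    The `t * 2` transform is a placeholder, not a real computation.
    """
    return KVCache(entries=[t * 2 for t in prompt_tokens])


def decode(cache, max_new_tokens):
    """Generate tokens one at a time, reading and extending the cache."""
    output = []
    for _ in range(max_new_tokens):
        # Placeholder for one forward pass over the cached state.
        next_token = sum(cache.entries) % 100
        output.append(next_token)
        cache.entries.append(next_token * 2)
    return output


def disaggregated_generate(prompt_tokens, max_new_tokens):
    """Run prefill and decode as separate stages with an explicit handoff."""
    cache = prefill(prompt_tokens)        # assumed to run on the prefill hardware
    return decode(cache, max_new_tokens)  # assumed to run on the decode hardware


if __name__ == "__main__":
    print(disaggregated_generate([1, 2, 3], max_new_tokens=3))
```

The point of the sketch is the interface between the two functions: because decode only needs the cache that prefill produced, the two stages can in principle run on different hardware, with the cost of transferring that cache as the price of the split.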

What This Means for Nvidia

The deal is a clear signal that AWS is looking beyond Nvidia's GPU dominance for AI workloads. While Nvidia GPUs remain the default choice for AI training, inference — which accounts for a growing share of AI compute spending — is emerging as a battleground where alternative architectures can compete.

Cerebras's approach is radically different from Nvidia's. Instead of clustering thousands of separate GPUs together, Cerebras builds a single chip from an entire silicon wafer, which avoids much of the communication overhead between separate processors. Whether this architectural bet pays off at AWS scale remains to be seen.

Timeline and Availability

The new inference service will be available through Amazon Bedrock, launching in the next couple of months with full availability expected in the second half of 2026. AWS will continue offering its own Trainium processors for customers who want slower but cheaper AI computing options.

The Bottom Line

AWS partnering with Cerebras is both a vote of confidence in alternative AI chip architectures and a hedge against Nvidia's pricing power. The "20x faster" claim is eye-catching, but real-world performance on production workloads will be the true test. For now, this is AWS signaling that the AI chip market is far from settled — and that Nvidia's monopoly on AI compute may have an expiration date.