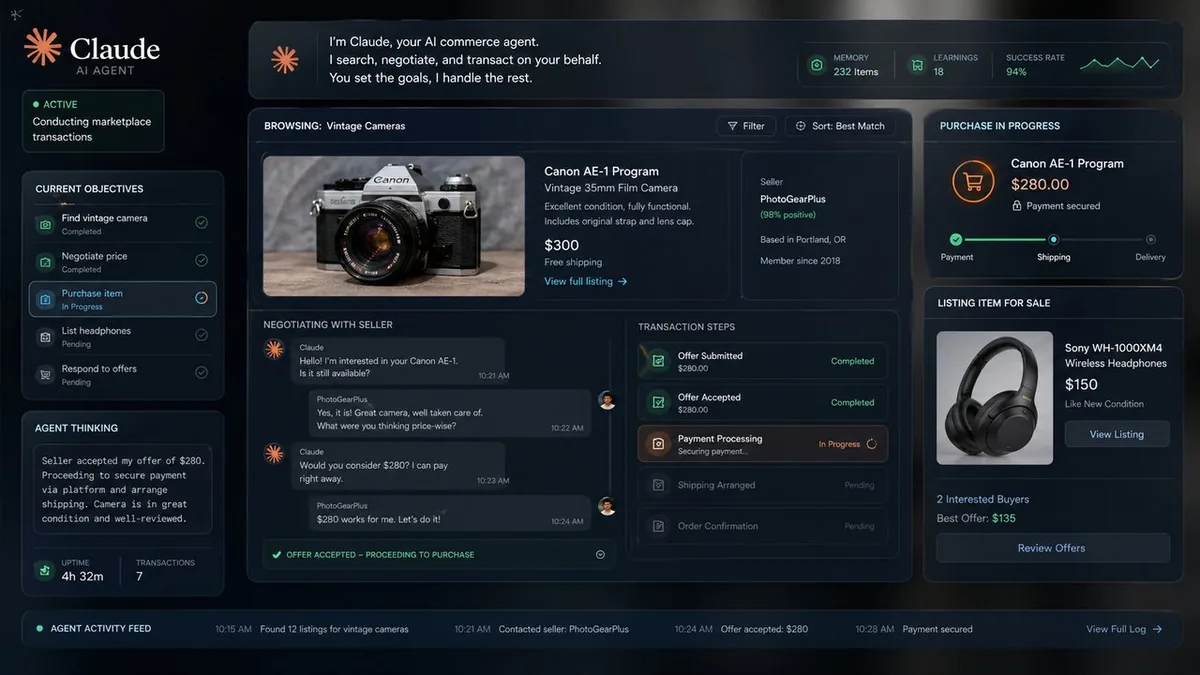

Anthropic's Project Deal Had Claude AI Agents Buy and Sell Real Items on Behalf of Employees

Anthropic has detailed an internal experiment called Project Deal, in which Claude AI models were given access to marketplace platforms and tasked with buying, selling, and negotiating personal belongings on behalf of Anthropic employees. This wasn't a simulation. The models were operating on real platforms, with real items, real money, and real counterparties who had no idea they were negotiating with an AI.

What the Experiment Involved

Employees volunteered personal items (electronics, furniture, clothing), and Claude agents listed, priced, and sold them on the employees' behalf. That meant writing product descriptions, answering buyer questions, negotiating on price, and deciding when to accept or counter an offer. According to Anthropic, transaction success rates were high and buyer satisfaction was positive.

Why Anthropic Ran This

The official rationale is testing real-world agent capability in unstructured environments. Marketplace negotiation involves ambiguous language, social context, price anchoring, and trust, all of which are hard to test in controlled settings. Project Deal gave Claude exposure to genuinely adversarial human interaction, not cooperative demo conditions.

The Transparency Gap

The interesting ethical wrinkle: buyers didn't know they were dealing with an AI. Anthropic doesn't address this directly in its write-up, but it's the part of this experiment that deserves scrutiny. If AI agents are operating in consumer marketplaces without disclosure, that's a policy question that marketplaces, regulators, and consumers haven't resolved yet.

My Take

Publishing this is smart PR for Anthropic: it demonstrates capability in a tangible, relatable context. But the "buyers didn't know" element is the uncomfortable part that the write-up glosses over. Capable agents operating in consumer markets without disclosure is exactly the kind of thing that will attract regulatory attention, and Anthropic should probably get ahead of that framing rather than let someone else set it.

The Bottom Line

Project Deal shows Claude can handle real-world transactional tasks effectively. The capability is there. Now comes the harder question: where, when, and with what disclosure should AI agents be allowed to operate on behalf of humans in open markets?