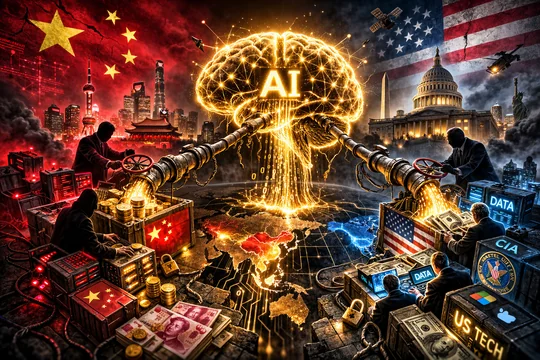

Anthropic Accuses DeepSeek, Moonshot, and MiniMax of Stealing Claude Data

Anthropic has accused three Chinese artificial intelligence companies — DeepSeek, Moonshot AI, and MiniMax — of systematically stealing its AI capabilities by using more than 24,000 fake accounts to extract data from its Claude models through a technique called distillation.

According to Anthropic, the scale of the operation was staggering. MiniMax generated more than 13 million exchanges with Claude, specifically targeting agentic coding, tool use, and orchestration capabilities. Moonshot AI conducted more than 3.4 million exchanges, and DeepSeek carried out over 150,000. Anthropic says all of this traffic came through fraudulent accounts created to get around its terms of service.

What Is AI Distillation?

Distillation is a well-established AI training technique where the outputs of a large, powerful model are used to train a smaller, more efficient one. While AI companies commonly use it on their own models, Anthropic alleges these Chinese labs used it to essentially copy Claude's capabilities without authorization — feeding its responses into their own training pipelines to replicate its most advanced features.
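To make the idea concrete, here is a minimal sketch of the classic soft-label form of distillation, in which a student model is penalized for diverging from a teacher's output distribution. The logits, the temperature value, and the function names are illustrative assumptions, not anything described by Anthropic. With API-only access a teacher's logits are generally not exposed, so in that setting a student would typically be trained on the teacher's generated text instead; the principle of matching the teacher's outputs is the same.

```python
import math

def softmax(logits, temperature=1.0):
    """Convert raw scores to probabilities; higher temperature flattens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's softened distribution to the student's."""
    p = softmax(teacher_logits, temperature)  # teacher (target) distribution
    q = softmax(student_logits, temperature)  # student (predicted) distribution
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Hypothetical logits over three candidate outputs.
teacher = [4.0, 1.0, 0.5]
close_student = [3.8, 1.1, 0.4]  # nearly agrees with the teacher
far_student = [0.5, 1.0, 4.0]    # prefers a different output

print(distillation_loss(teacher, close_student))
print(distillation_loss(teacher, far_student))
```

Training the student means adjusting its parameters to push this loss toward zero across many examples. Some formulations also scale the loss by the square of the temperature, which this sketch omits.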

Anthropic says the targeting was deliberate: the labs focused on what it describes as Claude's most differentiated capabilities, namely agentic reasoning, tool use, and coding, areas where the company says Claude leads the market.

National Security Implications

Anthropic's accusations come with serious national security warnings. The company argues that distilled models stripped of safety guardrails pose significant risks. Unlike Claude, which is built with protections against misuse, these distilled copies would, in Anthropic's account, lack the safeguards designed to prevent state and non-state actors from using AI to develop bioweapons or conduct malicious cyber operations.

Anthropic warns that foreign laboratories using distilled American AI models could feed unprotected capabilities into military, intelligence, and surveillance systems — enabling authoritarian regimes to deploy frontier AI for offensive purposes, disinformation campaigns, and mass surveillance.

Timing: The US AI Chip Export Debate

The allegations land at a particularly sensitive moment. The US government is actively debating export controls on advanced AI chips to China. Anthropic's disclosure adds a new dimension to the conversation: if Chinese labs can extract frontier AI capabilities through API access rather than hardware, chip restrictions alone may not be sufficient to maintain America's AI edge.

The real vulnerability, the accusations suggest, isn't just in silicon — it's in model access.

The Companies Named

- DeepSeek — the Chinese AI lab that recently made global headlines for releasing models that rivaled Western frontier models at a fraction of the cost

- Moonshot AI — a prominent Chinese AI startup backed by major investors

- MiniMax — a Chinese multimodal AI company focused on agentic and coding capabilities

All three are major players in China's rapidly advancing AI ecosystem, which gives Anthropic's accusations added weight in the context of US-China tech competition.

What This Means for AI Security

This case highlights a growing challenge for AI companies: protecting not just their model weights, but their model behaviors. As frontier models become accessible via API, capability extraction through distillation becomes a systemic threat. Hardware export controls alone cannot address it; it also calls for robust API-level monitoring and enforcement.
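As a rough illustration of what API-level monitoring could involve, the sketch below flags accounts whose exchange volume exceeds a threshold. The log format, the field names, and the threshold are all hypothetical; this is not a description of Anthropic's actual detection methods, and a real system would likely combine many signals, such as links between accounts and patterns in prompt content.

```python
from collections import Counter

def flag_high_volume_accounts(request_log, max_exchanges):
    """Return the IDs of accounts with more than max_exchanges logged exchanges."""
    counts = Counter(entry["account_id"] for entry in request_log)
    return sorted(acct for acct, n in counts.items() if n > max_exchanges)

# Hypothetical request log: one entry per exchange.
request_log = (
    [{"account_id": "acct-a", "topic": "coding"}] * 5
    + [{"account_id": "acct-b", "topic": "chat"}] * 2
)

flagged = flag_high_volume_accounts(request_log, max_exchanges=3)
print(flagged)  # ['acct-a']
```

A per-account volume check like this would miss traffic spread thinly across thousands of accounts, which is why grouping accounts by shared attributes matters in practice.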

Anthropic's move to publicly name these companies signals a shift toward treating AI capability theft as a serious offense with real-world consequences — not just a terms-of-service violation.