Alibaba Releases Qwen3.6-35B-A3B, an Open-Weight AI Model That Rivals Larger Dense Models

Alibaba's Qwen team has released Qwen3.6-35B-A3B, an open-weight mixture-of-experts (MoE) language model with 35 billion total parameters and 3 billion active parameters at inference time. Despite activating only a fraction of its total parameters for any given input, the model delivers benchmark performance that rivals significantly larger dense models — particularly in agentic coding tasks. The model is available on Hugging Face and ModelScope under an open license.

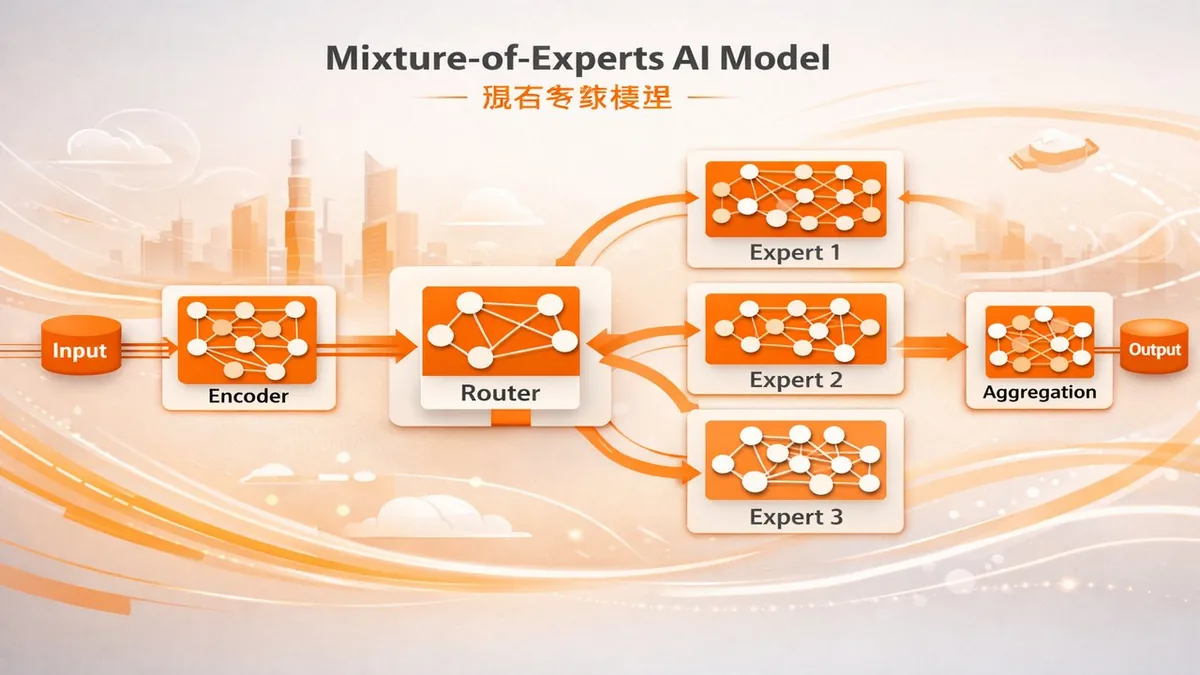

What Mixture-of-Experts Architecture Means

In a mixture-of-experts model, the full parameter count is distributed across many specialized "expert" sub-networks. For any given input, a router selects only a subset of experts to activate — in Qwen3.6-35B-A3B's case, roughly 3 billion of 35 billion total parameters. This means the model runs inference at the compute cost of a 3B model while drawing on the learned representations of a 35B model. The efficiency gain is significant: developers running the model locally or on modest cloud infrastructure can access a level of capability that would otherwise require much larger compute budgets.

Performance on Agentic Coding Tasks

Alibaba specifically highlights Qwen3.6-35B-A3B's performance on agentic coding benchmarks — tasks that involve multi-step reasoning, tool use, and sustained context across long sequences. The model is competitive with larger dense models including some frontier closed-source models on these benchmarks. For developers building AI coding agents or software that requires extended reasoning over complex codebases, this represents a meaningful capability at open-weight availability — meaning it can be fine-tuned, run locally, and deployed without per-token API costs.

The Open-Weight AI Landscape

Alibaba's Qwen series has become one of the most capable open-weight model families available, alongside Meta's Llama series, Mistral's models, and Google's Gemma. The release of Qwen3.6-35B-A3B continues Alibaba's pattern of releasing models that are competitive with leading closed-source models at the time of release — a strategy that builds developer adoption and establishes Alibaba as a foundational AI research organization outside China's borders. The MoE architecture specifically addresses the hardware cost barrier for researchers and companies that cannot afford to run 70B+ dense models.

Local Deployment Implications

At 3 billion active parameters, Qwen3.6-35B-A3B can run on consumer hardware with a modern GPU — making it accessible to individual researchers, small companies, and developers in regions where API access to frontier models is restricted or expensive. The 3B active parameter figure means memory requirements are manageable: a machine with 16–24GB of VRAM can run the model comfortably. This positions it as a practical choice for applications that require on-device inference for privacy, latency, or cost reasons.

The Bottom Line

Qwen3.6-35B-A3B is Alibaba's strongest open-weight release to date for agentic use cases. The MoE architecture makes it efficient enough to deploy at scale while delivering capabilities that compete with much larger models. For the open-source AI community, it raises the capability floor for what's achievable without proprietary APIs — and continues the competitive pressure on closed-source model providers to justify their pricing relative to increasingly capable free alternatives.

Related Articles

- Alibaba Releases Happy Oyster AI World Model for 3D Environments

- ByteDance Launches Seedance 2.0 Video Model to Enterprise

- Anthropic Releases Claude Opus 4.7