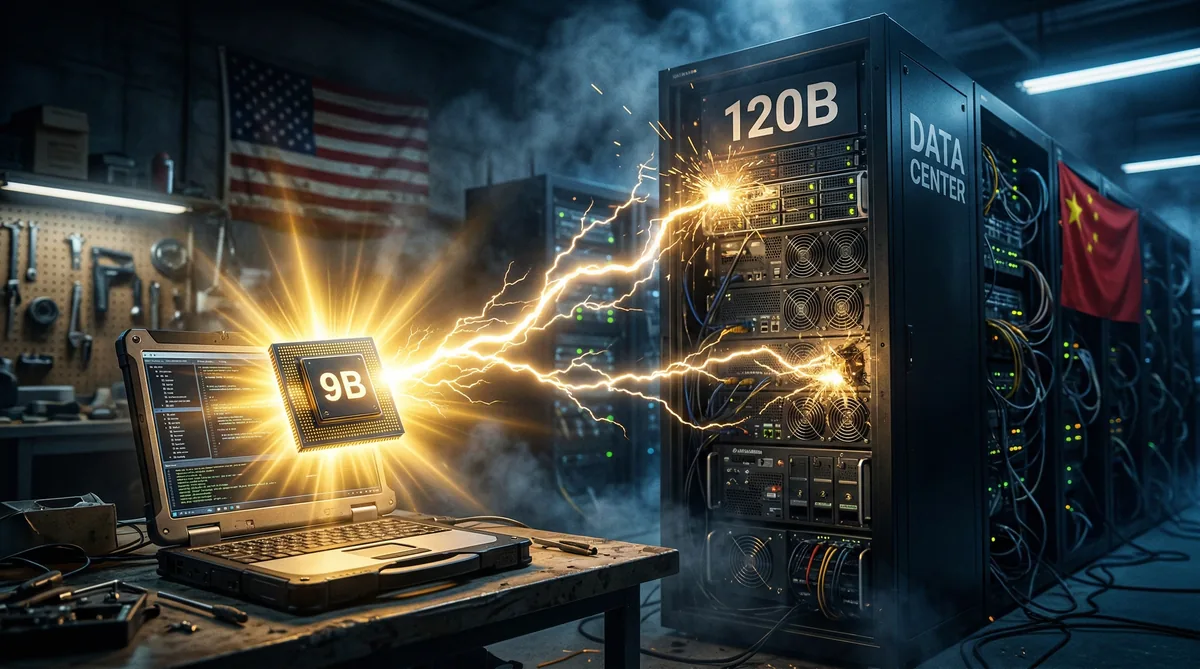

Alibaba's Qwen3.5-9B Beats OpenAI's 120B Model — And Runs on a Laptop

Alibaba's Qwen Team has released the Qwen3.5 Small Model Series, a family of open-weight AI models in 0.8B, 2B, 4B, and 9B sizes that punch far above their weight. The headline claim: the 9B model outperforms OpenAI's gpt-oss-120B on several key benchmarks despite being roughly 13 times smaller by parameter count. It is also small enough to run on a standard laptop.

The Numbers That Matter

The benchmark results are striking:

- Graduate-level reasoning (GPQA Diamond): Qwen3.5-9B scores 81.7, beating gpt-oss-120B's 80.1

- Visual reasoning (MMMU-Pro): 70.1, outperforming even Gemini 2.5 Flash-Lite (59.7)

- Video understanding (Video-MME): 84.5, significantly ahead of Flash-Lite's 74.6

- Math (HMMT, the Harvard-MIT Mathematics Tournament): 83.2 for the 9B, 74.0 for the 4B

- Multilingual knowledge (MMMLU): 81.2, beating gpt-oss-120B's 78.2
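
The margins in the list above are easy to tabulate. This is a minimal Python sketch that uses only the scores quoted in this article; the dictionary layout and the helper name `margins` are illustrative choices, not part of any benchmark tooling.

```python
# Scores quoted in this article: (Qwen3.5-9B score, comparison model, comparison score).
quoted_scores = {
    "GPQA Diamond": (81.7, "gpt-oss-120B", 80.1),
    "MMMU-Pro": (70.1, "Gemini 2.5 Flash-Lite", 59.7),
    "Video-MME": (84.5, "Gemini 2.5 Flash-Lite", 74.6),
    "MMMLU": (81.2, "gpt-oss-120B", 78.2),
}


def margins(scores):
    """Return the point difference between Qwen3.5-9B and each comparison model."""
    return {name: round(qwen - other, 1) for name, (qwen, _, other) in scores.items()}


for benchmark, delta in margins(quoted_scores).items():
    rival = quoted_scores[benchmark][1]
    print(f"{benchmark}: +{delta} points over {rival}")
```

On these figures the leads over gpt-oss-120B on the text benchmarks are narrow (1.6 and 3.0 points), while the leads over Flash-Lite on the multimodal benchmarks are around 10 points.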

The 4B model is nearly as capable as the previous generation's 80B model. That's not incremental progress — it's a generational leap in efficiency.

What Makes It Different

The architecture departs from the standard Transformer. Alibaba uses a hybrid design that combines Gated Delta Networks (a form of linear attention) with sparse Mixture-of-Experts (MoE). Linear attention keeps a fixed-size state instead of a key-value cache that grows with every token, which eases the "memory wall" that typically limits small models on long inputs. The claimed result is higher throughput with significantly lower latency.
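
To make the linear-attention idea concrete, here is a toy sketch of a gated delta-rule update of the kind described in the Gated DeltaNet literature. It is not Qwen's implementation: the function name `gated_delta_step`, the scalar gates `alpha` and `beta`, and the tiny dimensions are all assumptions for illustration. What it shows is that the layer carries a fixed-size state matrix from token to token rather than a cache that grows with the sequence.

```python
def gated_delta_step(state, q, k, v, alpha, beta):
    """One token of a gated delta-rule recurrence (illustrative, pure Python).

    state: d_v x d_k matrix as a list of rows (the fixed-size memory)
    q, k:  query and key vectors of length d_k
    v:     value vector of length d_v
    alpha: decay gate in [0, 1]; beta: write strength in [0, 1]
    """
    # What the memory currently returns for this key: state @ k.
    predicted = [sum(s * kj for s, kj in zip(row, k)) for row in state]
    # Erase the old association for k, decay by alpha, then write the new value:
    # new_state = alpha * (state - beta * predicted k^T) + beta * v k^T
    new_state = [
        [alpha * (state[i][j] - beta * predicted[i] * k[j]) + beta * v[i] * k[j]
         for j in range(len(k))]
        for i in range(len(v))
    ]
    # Read out with the query: new_state @ q.
    output = [sum(s * qj for s, qj in zip(row, q)) for row in new_state]
    return new_state, output


state = [[0.0, 0.0], [0.0, 0.0]]
key = [1.0, 0.0]  # unit-norm key, so a full-strength write overwrites exactly
state, _ = gated_delta_step(state, key, key, [2.0, 3.0], alpha=1.0, beta=1.0)
state, out = gated_delta_step(state, key, key, [5.0, 7.0], alpha=1.0, beta=1.0)
print(out)  # the second write replaces the value stored under the same key
```

However many tokens pass through, `state` stays a 2 x 2 matrix here, which is the property that keeps memory use flat as the context grows.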

The models are also natively multimodal — trained using early fusion on multimodal tokens rather than bolting a vision encoder onto a text model. The 4B and 9B can read UI elements, count objects in video, and perform visual reasoning at a level previously requiring 10x larger models.

Open Source Under Apache 2.0

All models are released under the Apache 2.0 license and are available on Hugging Face and ModelScope. The license permits commercial use, modification, and redistribution, subject to its attribution and notice requirements. The 4B model supports a 262,144-token context window, making it suitable for processing entire codebases or long documents.
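
As a rough way to judge whether a codebase fits in that window, the sketch below estimates token counts from character counts. The ratio of four characters per token is an assumed rule of thumb, not a property of the Qwen tokenizer, and `estimate_tokens` and `fits_in_context` are hypothetical helpers. An accurate count would need the model's actual tokenizer.

```python
CONTEXT_WINDOW = 262_144  # tokens, as quoted for the 4B model (equals 2**18)
ASSUMED_CHARS_PER_TOKEN = 4  # rough rule of thumb, not a tokenizer measurement


def estimate_tokens(text, chars_per_token=ASSUMED_CHARS_PER_TOKEN):
    """Crude token estimate: character count divided by an assumed ratio, rounded up."""
    return -(-len(text) // chars_per_token)


def fits_in_context(documents, reserve_for_output=4_096):
    """Return (fits, estimated_total) for a list of strings, leaving room for the reply."""
    total = sum(estimate_tokens(doc) for doc in documents)
    return total + reserve_for_output <= CONTEXT_WINDOW, total


docs = ["def main():\n    pass\n" * 1000, "README text " * 500]
print(fits_in_context(docs))
```

Reserving part of the window for the model's reply is a design choice in this sketch; the size of that reserve is arbitrary and should be tuned to the task.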

Why This Matters

The "more intelligence, less compute" mantra is becoming real. When a 9B model running on a laptop can beat a 120B model requiring data center infrastructure, the economics of AI shift dramatically. Edge deployment, mobile AI, and offline use become viable for tasks that previously required cloud APIs.
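
A back-of-envelope calculation shows why parameter count matters for where a model can run. The sketch below estimates weight storage only, at a few common precisions. It ignores activations, the attention cache, and runtime overhead, and the precisions listed are assumptions rather than the formats either model actually ships in.

```python
def weight_memory_gb(num_params, bits_per_param):
    """Approximate weight storage in gigabytes (10**9 bytes), weights only."""
    return num_params * bits_per_param / 8 / 1e9


models = {"9B model": 9e9, "120B model": 120e9}  # nominal sizes from the article
for name, params in models.items():
    for bits in (16, 8, 4):
        print(f"{name} at {bits}-bit: ~{weight_memory_gb(params, bits):.1f} GB")
```

Under these assumptions the 9B weights need about 4.5 GB at 4-bit and 18 GB at 16-bit, against 60 GB and 240 GB for a 120B model. That is roughly the gap between laptop memory and server hardware.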

For developers, this means powerful AI that's truly local-first: no API costs, no network latency, and no data leaving the device.

The Bottom Line

Alibaba's Qwen3.5 small models are a stark reminder that the AI race isn't just about building bigger. While US companies chase trillion-parameter flagships, Chinese labs are making the case that architectural innovation can deliver comparable benchmark results in a fraction of the size. The 9B beating OpenAI's 120B isn't just a benchmark win. It's a philosophical statement about the future of AI: smaller, faster, open, and running everywhere.