AI in Healthcare: Why Nurses Are Warning Hospitals About Patient Safety Risks

AI in Hospitals: Why New York Nurses Are Sounding the Alarm—and Why Every Healthcare Leader Should Pay Attention

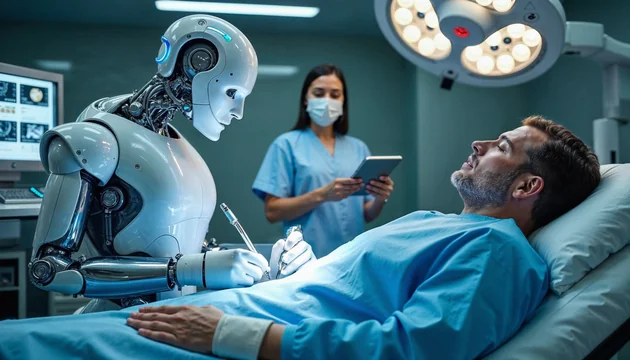

Artificial intelligence has been racing into healthcare, promising faster decisions, reduced costs, and improved patient outcomes. But while tech vendors and hospital executives celebrate this digital leap, many frontline nurses in New York City are raising urgent red flags.

Their message is blunt: the rollout isn’t just fast—it’s reckless.

And if the concerns they’re voicing today aren’t addressed, hospitals nationwide may soon face the same backlash.

In this article, we break down what’s really happening beneath the surface, why nurses feel blindsided, and what the next generation of AI-driven healthcare must get right.

The Core News: Nurses Say AI Is Being Deployed Without Them — and at Their Expense

According to recent reporting, nurse leaders across New York claim hospital systems are deploying AI-powered tools without meaningful consultation or training for the people who actually deliver bedside care.

Nurses shared troubling examples—such as unexpected monitoring devices appearing in ICUs without warning or instruction—highlighting the widening disconnect between frontline staff and administrative decision-makers. Concerns are especially sharp at major networks such as Mount Sinai and Maimonides, where nurses fear that AI systems designed to “assist” may ultimately replace or dilute the expertise of trained professionals.

Leaders like Nancy Hagans of the New York State Nurses Association argue that introducing AI without nurse participation undermines both safety and trust. The union has pushed for stronger guardrails in contract negotiations, emphasizing that AI should support—not supplant—human judgment.

Why This Matters: AI Adoption Is Colliding With Real-World Clinical Complexity

This moment is bigger than one hospital system. It highlights a nationwide tension:

Healthcare AI is accelerating faster than healthcare culture can adapt.

Executives see AI as:

- A cost-saving mechanism
- A tool for workflow automation
- A pathway to precision care

But nurses see:

- Increased workload (because human review is still required)
- Reduced oversight
- Technology rolled out without training
- Devices making decisions without context
- Potential bias encoded into clinical recommendations

This disconnect isn’t about resisting innovation. It’s about responsible integration.

Healthcare is not a software environment where rapid iteration is safe. When things break, the consequences are measured in patient lives—not user churn or slow load times.

The Bigger Picture: What This Reveals About AI’s Growing Pains in Medicine

1. Hospitals Are Prioritizing Tech ROI Over Staff Readiness

Budgets are shifting dramatically toward digital transformation, often without equal investment in human training. Nurses feel the change immediately—they’re the ones validating AI’s output, correcting errors, and double-checking machine-driven recommendations.

2. AI Vendors Are Entering Care Spaces Faster Than Regulation Can Keep Up

Some systems appear to be piloting devices or software directly on patients without comprehensive oversight. If this trend scales, expect federal scrutiny to intensify, especially from the FDA, which regulates medical devices, and from agencies that enforce patient rights.

3. The Human Element Is Still Irreplaceable in High-Stakes Care

Even the most advanced AI cannot replicate holistic assessments such as:

- Reading a patient’s emotional state
- Noticing changes in breathing patterns
- Catching subtle inconsistencies machines miss
- Understanding when data conflicts with reality

Technology can calculate.

Only humans can care.

Our Take: AI Can Transform Healthcare — But Only if Nurses Are Architects, Not Afterthoughts

For AI to genuinely elevate patient outcomes, hospitals must rethink how they introduce and scale these tools.

What needs to happen next:

1. Co-Design With Clinicians

Nurses and bedside teams must be part of product selection, testing, and implementation—not merely informed after the fact.

2. Transparent Protocols and Real Training

No device or AI assistant should reach a patient’s bedside without clear workflows, accountability structures, and comprehensive staff training.

3. AI Governance Committees Should Be Standard

Hospitals need interdisciplinary review boards to evaluate all new digital tools for accuracy, bias, safety, and ethical compliance.

4. AI Should Automate Tasks, Not Clinical Judgment

Charting? Scheduling? Predictive alerts?

Great.

Replacing hands-on assessment or decision-making?

A hard no.

The lesson here isn’t to slow down innovation—it’s to elevate implementation.

The healthcare systems that get this right will lead the industry for decades.

Conclusion: The Future of Healthcare Requires Collaboration, Not Technology Alone

AI is reshaping medicine, but bedside clinicians are the backbone of patient care. If hospitals push forward without their expertise, they risk undermining not only patient safety but also staff morale and public trust.

New York nurses aren’t rejecting AI—they’re demanding a seat at the table.

And if healthcare leaders are wise, they’ll pull up a chair.