AI Deepfakes Are Out of Control: What the Whirlpool Video Scandal Really Means

When Deepfakes Cross the Line: What a North Carolina Senator's Altered Video Teaches Us About AI Ethics, Corporate Responsibility & the Future of Trust

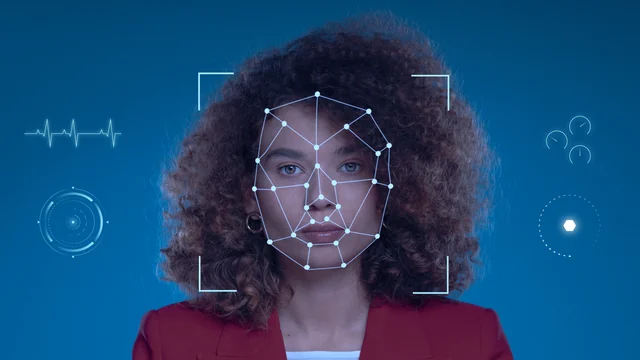

Artificial intelligence has unlocked extraordinary potential — but it has also opened the door to an era where seeing is no longer believing. A recent revelation involving North Carolina state senator DeAndrea Salvador is the latest example of how far AI manipulation can go and how unprepared most organizations still are for its ethical and legal consequences.

In this case, the deepfake was not aimed at a celebrity, and it was not part of a political smear campaign. It used a real public servant, whose TED Talk footage was quietly repurposed into a high-profile, award-winning advertisement without her knowledge, permission, or control.

And that should concern all of us.

The Incident: How a TED Talk Became an Unauthorized Whirlpool Ad

According to the original Washington Post report, Salvador discovered in June that a clip from her 2018 TED Talk had been altered using AI and embedded into a Brazilian Whirlpool advertising campaign.

The marketers kept her face, her outfit, her gestures — even the TED stage. But they changed her voice, changed her words, and changed her data slides, making it appear that she was advocating for a Whirlpool financing initiative aimed at low-income residents in São Paulo.

The ad went on to win top honors at the Cannes Lions Festival, the advertising industry's most prestigious stage.

Only later did Salvador learn her likeness had been appropriated to help sell appliances.

Why This Case Matters Much More Than One Altered Video

This deepfake controversy is not just about intellectual property or a single misleading advertisement. It exposes three powerful trends that brands, creators, policymakers, and everyday citizens need to understand.

1. Deepfake Technology Is Now Cheap, Fast, and Nearly Undetectable

You no longer need Hollywood-level resources to generate believable video manipulation. A single photo, a few seconds of audio, or an existing speech clip is enough to recreate a person’s likeness.

Experts warn that shadow AI — unauthorized AI projects done by employees without oversight — is becoming common inside corporations. That seems to be exactly what happened here.

That leaves companies far more exposed, because manipulated content can reach the public without anyone in charge having reviewed it.

2. Non-Celebrities Are Becoming Prime Targets

When most people think “deepfake victim,” they picture a celebrity. But this case highlights the reality:

Anyone with an online presence is at risk — professionals, founders, journalists, lawmakers, you name it.

As one expert in the original report noted, people like Salvador are particularly vulnerable because the general public doesn’t know their real voice or mannerisms well enough to spot manipulations.

This has severe implications for:

- Public trust
- Corporate brand reputation
- Political misinformation
- Online identity safety

3. Corporate Oversight of AI Is Now a Legal & Ethical Imperative

Whirlpool and creative agency Omnicom argued they did not intentionally mislead audiences. But that’s exactly the point:

If a global brand can release an award-winning ad without realizing it contains deepfake content, the oversight systems are fundamentally broken.

Brands must now ask:

- Do we have AI verification policies?
- Who audits the AI-generated content we publish?
- Are our agencies or subcontractors using undisclosed AI tools?
- How do we protect ourselves from liability?

The companies involved later apologized and returned their Cannes awards, but the damage was already done — to both the public's trust and the senator’s reputation.

The Bigger Picture: The Coming Wave of AI Identity Theft

Deepfake misuse won’t stay confined to marketing videos. Here’s what’s likely next:

• AI impersonations in political campaigns

We are already seeing fake robocalls, fabricated speeches, and AI-generated scandals.

• Fraud targeting small businesses and entrepreneurs

Fake video meetings and voice clones can authorize fund transfers or contracts.

• Manipulated corporate training or internal communications

Imagine receiving a video “from your CEO” that isn’t real.

• Reputation attacks on everyday individuals

College students, job applicants, and professionals can be digitally framed with ease.

This case is a warning shot across multiple industries.

Our Take: What Needs to Happen Next

To navigate the AI era responsibly, three major shifts are needed:

1. Mandatory AI Transparency in Corporate Content

Brands should be required to disclose when AI was used to generate or modify media. If food companies must label ingredients, AI-produced content should be no different.

2. Stronger Legal Protections for Personal Likeness

Right now, the law is playing catch-up. Public figures and private individuals alike need modern protections against having their face or voice repurposed without consent.

3. An Internal “AI Governance” Framework for Every Company

This should include:

- AI approval workflows
- Vendor disclosure requirements
- Employee training on ethical AI use
- Periodic audits
- Strict rules for using real people’s likeness

Companies cannot assume agencies or partners are playing by the rules.
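For teams that want to turn a framework like this into something enforceable, here is a minimal sketch of a pre-publication check in Python. It is an illustration only, not a description of any company's actual process: the `MediaAsset` record, its field names, and the `publication_blockers` function are all hypothetical, and a real workflow would also need audit logs, legal review, and human judgment.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class MediaAsset:
    """Hypothetical record for one piece of content awaiting publication."""
    title: str
    uses_ai: bool                   # was AI used to generate or modify it?
    vendor_disclosed_ai: bool       # did the agency or vendor declare that AI use?
    depicts_real_person: bool       # does it show a real person's face or voice?
    likeness_consent_on_file: bool  # is documented consent from that person on file?
    approved_by: list[str] = field(default_factory=list)  # internal sign-offs


def publication_blockers(asset: MediaAsset, required_approvals: int = 1) -> list[str]:
    """Return the reasons this asset should not be published yet (empty if none)."""
    blockers = []
    # Vendor disclosure requirement: AI use must be declared up front.
    if asset.uses_ai and not asset.vendor_disclosed_ai:
        blockers.append("AI use was not disclosed by the vendor")
    # Likeness rule: a real person's face or voice needs documented consent.
    if asset.depicts_real_person and not asset.likeness_consent_on_file:
        blockers.append("no documented consent for a real person's likeness")
    # Approval workflow: AI-assisted content needs internal sign-off.
    if asset.uses_ai and len(asset.approved_by) < required_approvals:
        blockers.append("missing internal AI approval")
    return blockers


# Example: an AI-altered ad showing a real person, with no disclosure or consent.
ad = MediaAsset(
    title="Campaign video",
    uses_ai=True,
    vendor_disclosed_ai=False,
    depicts_real_person=True,
    likeness_consent_on_file=False,
)
for reason in publication_blockers(ad):
    print("BLOCKED:", reason)
```

The point of the sketch is that each item on the list above can become an explicit question with a yes-or-no answer before anything ships, rather than an assumption that an agency or partner has already handled it.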

The Human Side: Salvador’s New Reality

Beyond the headlines, this incident left Salvador more cautious about speaking publicly — a deeply concerning outcome for any democracy or public sphere.

If leaders begin self-censoring out of fear of being digitally manipulated, innovation, advocacy, and public dialogue suffer.

We cannot allow AI misuse to have that chilling effect.

Conclusion: Deepfakes Are Not Just a Tech Problem — They’re a Trust Problem

The altered TED Talk incident should serve as a wake-up call. AI presents enormous opportunities, but without guardrails, even well-intentioned organizations can inadvertently undermine trust.

The responsibility now falls on:

- Lawmakers to modernize privacy and likeness laws
- Companies to adopt strict AI governance
- Creators and consumers to stay vigilant about authenticity
- Tech leaders to build safeguards into AI tools

The technology will only grow more powerful. Our systems — ethical, legal, and corporate — must grow with it.